The hiring process usually looks broken long before anyone admits it. Roles stay open, interview loops keep growing, hiring managers blame talent shortages, recruiters blame indecision, and strong candidates disappear somewhere between screening and offer. In technical hiring, that pattern gets expensive fast.

Many organizations do not face just one hiring challenge. They encounter a stack of smaller failures: vague intake, weak sourcing, inconsistent interviews, slow feedback, and no shared definition of what a strong hire looks like. For AI, cybersecurity, DevOps, and quant roles, those failures compound because the market doesn’t wait for internal confusion to clear up.

A better approach to improving the hiring process starts with treating hiring like an operating system. Every stage needs a clear owner, a measurable output, and a decision rule. That’s the playbook for repairing broken hiring funnels on technical teams that need speed without lowering the bar.

Table of Contents

- Diagnose Your Current Hiring Funnel

- Set Meaningful Hiring KPIs

- Redesign Your Sourcing and Screening

- Implement Structured Interviews and Assessments

- Optimize Workflows and Collaboration

- Build Your Implementation Roadmap

- Frequently Asked Questions About Hiring Process Improvement

Diagnose Your Current Hiring Funnel

Most hiring teams jump straight to solutions. They rewrite the job post, buy another sourcing tool, or add more interviewers. That usually makes the process noisier, not better. The first move is to inspect the funnel stage by stage and identify where the delay or quality loss starts.

A working diagnosis has to separate symptoms from causes. “We aren’t getting good candidates” might mean the intake was vague, the screening questions were generic, or the panel couldn’t agree on what good looked like. “Candidates keep dropping out” often traces back to slow follow-up, unclear process communication, or interview loops that ask the same thing twice.

Map the real funnel, not the ideal one

Start with the actual path a candidate takes today. Don’t use the process map from an old recruiting deck. Pull recent requisitions and document what happened in practice.

Track these stages in order:

- Application or source entry: Where the candidate came from and what signal qualified them for outreach.

- Recruiter screen: Whether the screen had pass criteria or relied on general impressions.

- Manager review: How long resumes sat before a decision.

- Assessment stage: What test, exercise, or take-home was used, and who reviewed it.

- Interview loop: How many rounds happened, who attended, and where duplicate questioning showed up.

- Offer and close: How long approvals took and whether compensation alignment happened early enough.

Practical rule: If the team can’t explain why a candidate moved forward or got rejected at each stage, the funnel isn’t being managed. It’s being improvised.

A quick funnel map also reveals where candidate communication breaks down. Technical candidates won’t tolerate silence for long, especially when they’re interviewing elsewhere. Teams dealing with drop-off should also review the common communication gaps that lead to candidate ghosting and how to prevent them.

Find the bottleneck by role family

A common mistake is using one process for every technical hire. That’s manageable for broad hiring classes and destructive for specialized ones.

Quant and HFT recruiting make that obvious. Mainstream tech workflows often fail for ultra-low latency roles because the screening model is built for general software hiring, not market-making logic, low-level performance constraints, or real-time risk systems. Workfully’s hiring process analysis notes that time-to-hire for quant researchers and ML engineers is 40% longer than for standard software developers, averaging 45 days versus 32 days, and 67% of prop shops cite mismatched pipelines as a top barrier. The same source states that niche tools such as QuantNet can reduce drop-off by 25% in HFT hiring benchmarks.

That doesn’t mean every firm needs a separate hiring machine for every title. It does mean role families need different screens, different sourcing channels, and different interviewer calibration. A DevOps process can’t be copied onto a quant researcher search and expected to work.

Run a fast audit this week

A useful audit doesn’t need a quarter of analysis. It needs one working session with recruiting, the hiring manager, and one interviewer from the last closed role.

Use this checklist:

- Pull five recent requisitions. Include one filled role, one stalled role, and one role that lost a finalist.

- Mark every delay: resume review lag, interview reschedules, score submission lag, and offer approval lag.

- Compare source quality. Look at which channels produced interviews, not just applications.

- Review rejection reasons. If the reasons sound inconsistent or vague, the evaluation criteria aren’t stable.

- Segment by role type. Separate broad engineering roles from high-specialization searches.
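Once stage dates are exported, the delay-marking and source-comparison steps can be approximated with a short script. This is a minimal sketch under assumptions: the record layout, stage names, and sample dates are hypothetical and do not reflect any particular ATS export format.

```python
from collections import Counter, defaultdict
from datetime import date
from statistics import median

# Hypothetical ATS export: the date each candidate entered each stage.
# Stage names and record layout are illustrative assumptions, not a real schema.
STAGES = ["applied", "recruiter_screen", "manager_review", "interview", "offer"]

candidates = [
    {"source": "referral", "stages": {"applied": date(2024, 3, 1), "recruiter_screen": date(2024, 3, 4),
                                      "manager_review": date(2024, 3, 12), "interview": date(2024, 3, 15)}},
    {"source": "job_board", "stages": {"applied": date(2024, 3, 2), "recruiter_screen": date(2024, 3, 9)}},
    {"source": "job_board", "stages": {"applied": date(2024, 3, 3), "recruiter_screen": date(2024, 3, 6),
                                       "manager_review": date(2024, 3, 20), "interview": date(2024, 3, 22),
                                       "offer": date(2024, 3, 29)}},
]


def stage_lags(candidates):
    """Median days between consecutive stages, counting only candidates who reached both."""
    lags = defaultdict(list)
    for cand in candidates:
        entered = cand["stages"]
        for prev, nxt in zip(STAGES, STAGES[1:]):
            if prev in entered and nxt in entered:
                lags[f"{prev} -> {nxt}"].append((entered[nxt] - entered[prev]).days)
    return {step: median(days) for step, days in lags.items()}


def interviews_by_source(candidates):
    """(candidates who reached interview, total candidates) for each source channel."""
    volume = Counter(c["source"] for c in candidates)
    interviewed = Counter(c["source"] for c in candidates if "interview" in c["stages"])
    return {src: (interviewed[src], volume[src]) for src in volume}


print(stage_lags(candidates))
print(interviews_by_source(candidates))
```

On this toy data the slowest hand-off is recruiter screen to manager review (a median of 11 days), and the job board produces twice the volume of the referral channel but the same single interview. That is the kind of finding the audit is meant to surface.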

The outcome should be blunt. One team may discover sourcing isn’t the issue at all. Another may learn the actual blocker is a hiring manager who takes too long to review resumes. Another may find that assessments are filtering out strong candidates because they test the wrong skills.

That’s the point of diagnosis. It gives the team a place to intervene with precision instead of adding more process everywhere.

Set Meaningful Hiring KPIs

Many hiring dashboards are crowded and useless. They track activity, not outcomes. A better system uses a small set of measures that force better decisions and expose failure early.

Track the three signals that matter

The strongest KPI set for technical hiring usually comes down to time-to-fill, quality-of-hire, and candidate experience. Each one answers a different operational question.

Time-to-fill shows whether the process moves fast enough for the market the team is hiring in. It’s the clearest signal of operational drag. If this number rises, the team should inspect approvals, resume review time, scheduling friction, and offer turnaround before blaming talent supply.

Quality-of-hire answers the harder question: did the process select someone who performs well in the actual job? This metric matters more than application volume, response rate, or total interviews completed. A hiring process that moves quickly but produces weak hires isn’t efficient. It’s just fast failure.

Candidate experience captures whether strong people want to stay in process. This isn’t about being nice for its own sake. Clear timelines, prepared interviewers, and decisive communication directly affect close rates and employer reputation.

A hiring process can be busy and still be ineffective. The right KPI set exposes that quickly.

Use formulas people can actually maintain

The KPI formula matters less than consistency. If a team picks a method it can’t update without manual cleanup across five systems, it won’t last.

A practical setup looks like this:

| KPI | Simple formula | What to include |
|---|---|---|
| Time-to-fill | Requisition open date to accepted offer date | Track by role family, not one company-wide average |
| Quality-of-hire | Manager satisfaction + ramp performance + retention status | Use a simple internal score after onboarding milestones |
| Candidate experience | Post-process feedback from interviewed candidates | Ask short questions on clarity, speed, and professionalism |

A few operating rules make these KPIs useful:

- Define start and end dates once: Recruiting and finance often count time differently. Pick one definition and stick to it.

- Separate role categories: A cloud engineer, a healthcare IT leader, and a quant researcher shouldn’t be forced into the same benchmark.

- Use manager inputs sparingly: Long evaluation forms don’t get completed. Short ratings with clear criteria do.

- Review monthly, not continuously: Weekly KPI debates often create noise. Monthly reviews produce cleaner decisions.
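The first two operating rules translate directly into code. The sketch below assumes hypothetical requisition records with `role_family`, `opened`, and `offer_accepted` fields (not taken from any specific ATS) and applies one fixed definition, requisition open date to accepted offer date, grouped by role family. It reports the median rather than the mean; that is an assumption chosen so a single stalled search doesn’t dominate a small sample.

```python
from collections import defaultdict
from datetime import date
from statistics import median

# Hypothetical requisition records; field names are illustrative assumptions.
requisitions = [
    {"role_family": "devops", "opened": date(2024, 1, 8), "offer_accepted": date(2024, 2, 9)},
    {"role_family": "devops", "opened": date(2024, 1, 15), "offer_accepted": date(2024, 2, 12)},
    {"role_family": "quant", "opened": date(2024, 1, 3), "offer_accepted": date(2024, 2, 21)},
    {"role_family": "quant", "opened": date(2024, 2, 1), "offer_accepted": None},  # still open
]


def time_to_fill(req):
    """Days from requisition open date to accepted offer date, or None if still open."""
    if req["offer_accepted"] is None:
        return None
    return (req["offer_accepted"] - req["opened"]).days


def time_to_fill_by_family(reqs):
    """Median time-to-fill per role family; open requisitions are excluded, not counted as zero."""
    by_family = defaultdict(list)
    for req in reqs:
        days = time_to_fill(req)
        if days is not None:
            by_family[req["role_family"]].append(days)
    return {family: median(days) for family, days in by_family.items()}


print(time_to_fill_by_family(requisitions))
```

Skipping open requisitions keeps them from flattering the number. They are better watched separately as aging roles in the weekly review.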

For quality-of-hire, the simplest internal method is usually a weighted score based on manager evaluation, role ramp progress, and retention checkpoint status. The exact weighting can vary by company. What matters is that the team defines it before hiring, not after the hire turns out well or poorly.
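One way to pin the definition down before hiring is to encode it. The sketch below assumes a 1-to-5 manager rating, ramp progress measured as onboarding milestones met, and a yes/no retention checkpoint. The `HireReview` type is hypothetical, and the 40/40/20 weights are illustrative placeholders, not a recommendation.

```python
from dataclasses import dataclass

# Illustrative weights, fixed before the hire is made. The split is a company
# decision; these values are assumptions for the example only.
WEIGHTS = {"manager": 0.4, "ramp": 0.4, "retention": 0.2}


@dataclass
class HireReview:
    manager_rating: int          # 1-5 rating from the hiring manager
    ramp_milestones_met: int     # onboarding milestones reached so far
    ramp_milestones_total: int   # milestones planned for this checkpoint
    retained_at_checkpoint: bool


def quality_of_hire(review: HireReview, weights=WEIGHTS) -> float:
    """Weighted quality-of-hire score on a 0-100 scale."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    if not 1 <= review.manager_rating <= 5:
        raise ValueError("manager_rating must be between 1 and 5")
    if review.ramp_milestones_total <= 0:
        raise ValueError("ramp_milestones_total must be positive")
    manager = (review.manager_rating - 1) / 4  # map the 1-5 rating onto 0-1
    ramp = review.ramp_milestones_met / review.ramp_milestones_total
    retention = 1.0 if review.retained_at_checkpoint else 0.0
    score = (weights["manager"] * manager
             + weights["ramp"] * ramp
             + weights["retention"] * retention)
    return round(100 * score, 1)


print(quality_of_hire(HireReview(4, 3, 4, True)))
```

Because the weights live in one constant, changing them is a visible decision rather than a quiet adjustment made after a hire turns out well or poorly.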

Candidate experience should also stay simple. Ask candidates whether the process was clear, whether interviewers seemed aligned, and whether communication stayed timely. Those answers often reveal process weaknesses faster than internal meetings do.
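A short survey only helps if it is aggregated the same way every month. The sketch below assumes hypothetical 1-to-5 responses to the three questions above and an arbitrary 3.5 threshold for flagging a weak area; both the question keys and the threshold are assumptions to adjust.

```python
from statistics import mean

# Hypothetical 1-5 survey responses; question keys are illustrative assumptions.
responses = [
    {"clarity": 4, "interviewer_alignment": 2, "timeliness": 3},
    {"clarity": 5, "interviewer_alignment": 3, "timeliness": 2},
    {"clarity": 4, "interviewer_alignment": 2, "timeliness": 4},
]


def weak_areas(responses, threshold=3.5):
    """Average each question across respondents; return those below threshold, lowest first."""
    if not responses:
        return []
    averages = {q: round(mean(r[q] for r in responses), 2) for q in responses[0]}
    below = [(q, avg) for q, avg in averages.items() if avg < threshold]
    return sorted(below, key=lambda qa: qa[1])


print(weak_areas(responses))
```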

Teams that want to improve hiring outcomes should resist vanity metrics. Application counts can go up while hire quality goes down. Interview volume can rise while the funnel slows. Good KPIs don’t flatter the process. They pressure it.

Redesign Your Sourcing and Screening

Most hiring problems don’t begin in the interview. They begin upstream with weak sourcing and vague screening. If the top of funnel is filled with the wrong people, the rest of the process becomes expensive sorting.

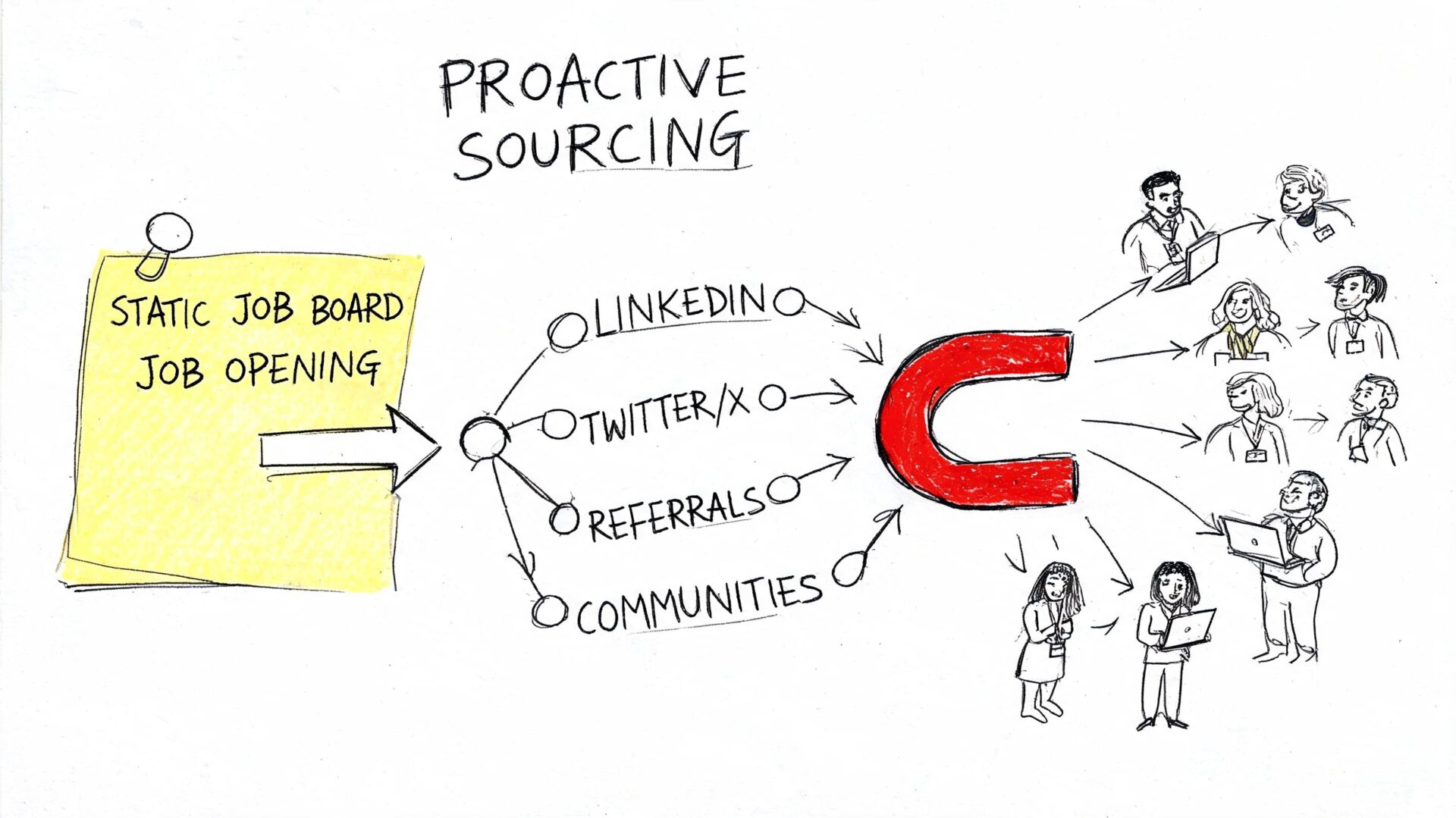

Stop posting and hoping

Job boards still have a place, but they shouldn’t be the center of the strategy for specialized technical hiring. High-skill candidates often come through direct outreach, communities, internal networks, and referrals. That’s especially true when the role requires scarce technical depth and strong judgment.

Referral programs are one of the clearest examples. TalentMsh recruiting trend data states that employee referrals generate just 7% of all applicants but account for nearly 30% of hires. The same source notes that referral hires are brought on 55% faster than candidates from other sources, and that companies with strong employer branding see a 50% reduction in cost-per-hire from the industry average of $4,700.
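The referral figures hide a per-applicant comparison. Taking the cited shares at face value, and treating “nearly 30%” as 30% of hires from 7% of applicants, the relative hire rate works out as:

$$
\frac{\text{referral hire rate}}{\text{non-referral hire rate}}
= \frac{0.30 / 0.07}{0.70 / 0.93}
= \frac{0.30 \times 0.93}{0.07 \times 0.70}
= \frac{0.279}{0.049} \approx 5.7
$$

Under those figures, a referred applicant is more than five times as likely to be hired as a non-referred one. The exact multiple shifts slightly with the true hire share.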

Those numbers matter because they point to a sourcing truth many teams resist. More applicants don’t automatically mean a healthier funnel. Better-matched applicants do.

A stronger sourcing mix for technical roles usually includes:

- Direct outreach: Search GitHub, LinkedIn, technical forums, and niche communities for evidence of relevant work.

- Employee networks: Ask current engineers who they’ve worked with under real delivery pressure.

- Specialized communities: Use role-specific spaces when the skills are narrow.

- Past silver medalists: Revisit strong candidates who were previously passed over for timing or fit, not quality.

Make referrals operational

Referral programs fail when they’re informal. “Send good people our way” isn’t a system. It’s a wish.

A workable referral process needs structure:

- Clear target roles: Tell employees exactly which openings matter most and what strong fit looks like.

- Simple submission path: Reduce friction. If the intake form is clunky, referrals won’t happen. Teams looking to clean up top-of-funnel data can also streamline lead capture with Orbit AI so referred and inbound candidates enter the process in a consistent format.

- Fast recruiter response: Employees stop referring when names vanish into a black box.

- Feedback loop: Let the referring employee know whether the candidate progressed and why.

The strongest referral programs don’t reward volume. They reward judgment.

There’s also a quality control issue in screening. Recruiters should review referred profiles against role requirements before pushing them to the manager. That protects the manager’s time and keeps employee trust intact.

Fix job descriptions before adding more spend

A bad job description weakens sourcing even if the recruiter is doing everything else right. Most technical job descriptions are bloated requirement lists with no signal about impact, environment, or what success looks like.

A strong description does three things well:

| Weak version | Better version |
|---|---|
| Lists every tool the team has touched | Focuses on the tools and problems the hire will actually own |
| Uses generic phrases like “rockstar” or “fast-paced” | Describes the work in concrete business and technical terms |
| Buries the mission under compliance language | Opens with why the role exists and what it needs to deliver |

A cleaner structure is usually:

- Why the role exists.

- What this person owns in the first stretch of the job.

- Which skills are required.

- Which skills are nice to have but not essential.

- How the interview process works.

Hiring teams that need a reset can use practical guidance on how to write effective job descriptions that convert. The biggest improvement often comes from removing noise, not adding copy.

This is also the right place to mention tools and partners selectively. Options such as Greenhouse, Lever, Ashby, and Nexus IT Group can support sourcing and resume review for specialized technical roles, but no tool or partner fixes a fuzzy role definition. Better inputs still drive better outputs.

Implement Structured Interviews and Assessments

Technical hiring breaks when interviewers rely on memory, instinct, or personal chemistry. Those methods feel efficient in the moment and create inconsistent decisions over time. Structured interviews solve that by turning interviews into comparable evaluations instead of loosely related conversations.

Build the scorecard before the interview

The scorecard comes first. Not after the recruiter kickoff. Not after the first candidate. Before anyone starts interviewing.

Success Performance Solutions cites a meta-analysis of 85 years of research showing structured interviews predict job performance with an r=0.81 validity coefficient versus 0.38 for unstructured interviews, and says structured methods can reduce bad hires by 50%. The same source notes that in tech, Google’s re:Work protocol yielded a 20-30% hire quality uplift, while IT staffing agencies report 25% faster time-to-hire. It also warns that 74% of technical evaluations lack scoring, which it links to a 40% rate of inconsistent decisions.

That set of findings explains why so many interview loops feel busy but produce shaky outcomes. Without scoring, every interviewer invents a private standard.

A practical implementation model looks like this:

- Define the competencies for the role.

- Weight them based on job importance.

- Assign interviewers to specific competencies.

- Create question sets tied to those competencies.

- Require written evidence for every score.

For a Site Reliability Engineer, competencies might include systems design judgment, incident response, automation depth, communication under pressure, and operational ownership. For a quant developer, the categories would shift toward low-latency engineering, numerical reasoning, market systems understanding, and code precision.

Use a simple scorecard template

A scorecard doesn’t need to be elaborate. It needs to be specific enough that two interviewers looking at the same answer would score it in roughly the same range.

| Component | Description | Example Metric (SRE Role) |
|---|---|---|
| Core competency | The capability being measured | Incident response judgment |
| Question prompt | Standardized question asked to each candidate | Describe a production outage you led and how you restored service |
| Evidence anchors | What weak, acceptable, and strong answers look like | Weak: vague ownership. Strong: clear triage, prioritization, and prevention steps |
| Scoring scale | Consistent rating method | 1 to 5 based on evidence quality |
| Weight | Relative importance to the role | Higher weight on reliability and systems thinking |
| Interview owner | Person responsible for assessing that area | Hiring manager or senior SRE |
| Notes field | Written proof behind the score | Specific examples from the candidate’s answer |
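The scorecard rules (weighted competencies, a 1-to-5 scale, and written evidence for every score) can be checked mechanically before a debrief. This is a minimal sketch: the `CompetencyScore` type and the example weights are hypothetical, and a weighted average is only one reasonable way to roll scores up.

```python
from dataclasses import dataclass


@dataclass
class CompetencyScore:
    competency: str
    weight: float  # relative importance, agreed before interviews start
    score: int     # 1-5, based on evidence quality
    notes: str     # written proof behind the score


def weighted_scorecard_total(scores: list[CompetencyScore]) -> float:
    """Weighted average on the 1-5 scale; refuses scores that lack written evidence."""
    missing = [s.competency for s in scores if not s.notes.strip()]
    if missing:
        raise ValueError(f"scores lack written evidence: {', '.join(missing)}")
    for s in scores:
        if not 1 <= s.score <= 5:
            raise ValueError(f"{s.competency}: score must be between 1 and 5")
    total_weight = sum(s.weight for s in scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return round(sum(s.weight * s.score for s in scores) / total_weight, 2)


# Hypothetical SRE scorecard; weights and notes are illustrative only.
sre_scores = [
    CompetencyScore("Incident response judgment", 3, 4, "Led triage, named rollback criteria"),
    CompetencyScore("Systems design judgment", 3, 3, "Sound design, missed failover path"),
    CompetencyScore("Automation depth", 2, 5, "Walked through own deploy tooling"),
    CompetencyScore("Communication under pressure", 1, 4, "Clear status-update example"),
]
print(weighted_scorecard_total(sre_scores))
```

Refusing to compute a total when notes are blank mirrors the rule that a score without evidence shouldn’t drive the decision. Some teams may prefer to keep per-competency scores visible in the debrief rather than leaning on the single number.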

Another operational choice matters here. Keep interview panels focused. A bloated panel often creates duplication and weak accountability. Each interviewer should know exactly what they’re evaluating before the call starts.

Train interviewers to score evidence, not chemistry

Good structured interviews still fail when interviewers haven’t been trained to use them. Calibration matters.

A short interviewer training should cover:

- Role brief: What problem the team is hiring to solve.

- Score interpretation: What distinguishes a 2 from a 4.

- Bias checks: How similarity bias shows up in technical interviews.

- Evidence standards: Notes should capture observed proof, not vague impressions.

- Debrief discipline: Interviewers submit scores before group discussion.

“Would this person be enjoyable to work with?” is not a valid hiring criterion unless the role explicitly requires a defined collaboration behavior and the team can measure it.

Structured assessments should also be relevant. Generic brainteasers and trivia-heavy quizzes don’t tell much about job performance. Better options mirror the work: architecture reviews, debugging exercises, code walkthroughs, incident retrospectives, or case discussions tied to the role.

For remote and coordination-heavy positions, interviewer preparation matters just as much as the question set. Teams refining virtual interviews may find useful prompts in this guide on interviewing for remote support roles, especially for evaluating communication, responsiveness, and working style across distributed environments.

The final discipline is documentation. Store scorecards in a shared system such as Lever, Greenhouse, or Ashby and require completion before debrief. If a score can’t be backed by evidence, it shouldn’t drive the decision.

Optimize Workflows and Collaboration

Broken workflows usually get blamed on tools. In practice, the deeper issue is ownership. If nobody knows who decides, who responds, and by when, the ATS becomes a record of confusion rather than a control system.

Use the intake meeting as the control point

The intake meeting is where most process quality is won or lost. If recruiter and hiring manager leave that meeting with different assumptions, the funnel starts drifting on day one.

A useful intake agenda includes:

- Business context: Why this role is open and what happens if it stays open.

- Must-have capabilities: Essential requirements, stripped of wishlist noise.

- Target profile: Where this person likely comes from.

- Assessment plan: What evidence will prove fit.

- Interview ownership: Who evaluates which competencies.

- Decision timeline: Resume review SLAs, interview windows, debrief timing, offer path.

This meeting should produce written output, not just verbal alignment. Put the scorecard, sourcing targets, and process timeline into the ATS or shared workspace immediately.

Configure the workflow around decisions

Most ATS setups track statuses. Better ones track decision points.

That means building the workflow so the system prompts the next action and shows when the process is stalled. Useful examples include resume review reminders, scorecard completion tasks, rejection communication templates, and visibility into pending approvals. Teams evaluating stack options can compare systems and categories in this roundup of top recruitment tools.
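The core of a decision-oriented workflow is stall detection: each pending step has an owner and an agreed deadline, and anything past it surfaces automatically. Most ATS products offer reminders of some kind, but the sketch below shows the logic in plain Python rather than any vendor’s API. The step names, owners, and SLA values are hypothetical stand-ins for whatever the intake meeting agreed.

```python
from datetime import date

# Hypothetical SLAs in days, as agreed at intake; values are assumptions.
SLA_DAYS = {"resume_review": 2, "scorecard_submission": 1, "offer_approval": 3}

# Hypothetical pending decision steps, each with a named owner.
pending = [
    {"candidate": "A", "step": "resume_review", "owner": "hiring_manager", "since": date(2024, 5, 1)},
    {"candidate": "B", "step": "scorecard_submission", "owner": "interviewer_2", "since": date(2024, 5, 3)},
    {"candidate": "C", "step": "offer_approval", "owner": "finance", "since": date(2024, 5, 3)},
]


def stalled(items, today, sla=SLA_DAYS):
    """Return pending steps that exceeded their SLA, most overdue first."""
    overdue = []
    for item in items:
        waited = (today - item["since"]).days
        limit = sla[item["step"]]
        if waited > limit:
            overdue.append({**item, "days_over": waited - limit})
    return sorted(overdue, key=lambda i: i["days_over"], reverse=True)


for item in stalled(pending, today=date(2024, 5, 5)):
    print(f'{item["candidate"]}: {item["step"]} with {item["owner"]}, {item["days_over"]} day(s) over')
```

Attaching an owner to every pending step is the design choice that matters here: an overdue item then points at a person to follow up with, not just a status.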

A few workflow rules make a major difference:

- Close loops quickly: Don’t wait for the full panel to finish before sharing known blockers.

- Use templates where consistency matters: Candidate communication, intake forms, and debrief notes should not be rewritten from scratch every time.

- Keep stages meaningful: If the ATS has too many statuses, people stop using them accurately.

- Audit handoffs: Most delays happen between people, not inside tasks.

Teams looking for broader operational ideas beyond recruiting can borrow from general workflow design. This practical guide on strategies to improve team workflow efficiency is useful because it focuses on reducing handoff friction and creating clearer ownership.

Treat confidential hiring differently

Confidential searches need tighter collaboration than standard hiring, not looser coordination. That’s especially true in healthcare and government IT, where public postings can create compliance risk. iProspectCheck’s overview of hiring tech talent notes that in Q1 2026, 55% of CIOs in regulated industries extended time-to-hire by 20% after AI ethics mandates, and says stealth sourcing and confidential processes show 30% higher retention.

That kind of search changes the workflow requirements. The recruiter and hiring manager need sharper calibration on target companies, disclosure limits, outreach language, and candidate handling. A sloppy confidential process can create brand risk, compliance problems, and a weak candidate experience all at once.

Confidential hiring works when recruiter and manager operate as one unit. It breaks when either side assumes the other is filling in the gaps.

The same lesson applies to standard hiring. Tools help. Process maps help. But the strongest improvement usually comes from making collaboration explicit, documented, and time-bound.

Build Your Implementation Roadmap

A full hiring overhaul usually fails when teams try to change everything at once. The cleaner approach is a phased rollout with one clear objective per month.

Days 1 through 30

Start with visibility and alignment.

- Audit the current funnel: Pull recent roles and map delays, drop-off points, and inconsistent decision moments.

- Define KPI formulas: Lock in how time-to-fill, quality-of-hire, and candidate experience will be measured.

- Run intake resets: For open roles, hold a fresh recruiter-manager intake and rewrite must-haves.

- Trim the interview loop: Remove duplicate rounds and unclear panel roles.

The main output for this first phase is operational clarity. Everyone should know the target profile, the interview plan, and the timeline for decisions.

Days 31 through 60

Pilot the new evaluation model on one role that matters.

Choose a role that is visible enough to matter but not so politically sensitive that the team becomes afraid to test new process. Build a scorecard, assign interviewer ownership, and require written scoring before debrief.

Use this period to inspect friction:

- Are interviewers using the scorecard correctly?

- Are hiring managers submitting feedback on time?

- Is the assessment tied to the work?

- Are candidates getting a clear view of the process?

This phase should produce one repeatable interview package that the team can adapt to similar roles.

Days 61 through 90

Once evaluation is stable, improve the front end of the funnel.

Refresh job descriptions for open technical roles. Formalize referral outreach. Tighten the inbound application experience. Review sourcing channels and cut the ones that generate noise without producing quality interviews.

End the quarter with a working operating cadence:

| Cadence | Review focus |
|---|---|
| Weekly | Open roles, blockers, aging candidates |
| Monthly | KPI review, source quality, interview consistency |
| Quarterly | Process redesign decisions and role-family adjustments |

The goal at day 90 isn’t perfection. It’s control. The team should have a process it can explain, measure, and improve without rebuilding from scratch each time a hard role opens.

Frequently Asked Questions About Hiring Process Improvement

Should every role use the same hiring process?

No. The core operating principles can stay consistent, but the sourcing channels, assessments, and interview criteria should change by role family. Specialized technical and quant roles need tighter calibration than broad generalist hiring.

What if hiring managers resist structure?

Resistance usually drops when the structure removes duplicate interviews and speeds up decisions. Keep the change practical. Ask for fewer opinions and better evidence.

How many interview stages are too many?

The answer depends on the role, but most bloated processes show the same flaw: multiple people assessing the same thing. If a round doesn’t test a distinct competency or move the decision forward, it should be removed.

What’s the fastest way to improve hire quality?

Define the role more sharply, use a scorecard, and force written evidence in every debrief. Those three changes usually improve decision quality faster than adding more interview rounds.

How should candidate experience be measured?

Use short post-process surveys for interviewed candidates and look for patterns in confusion, delays, and interviewer alignment. Keep the questions brief so the team can review responses consistently.

Nexus IT Group supports employers hiring for specialized technology roles across software, cloud, cybersecurity, data, leadership, and quant recruiting. Teams that need outside help diagnosing a stalled funnel, tightening interviews, or running a confidential search can explore Nexus IT Group for direct placement, contract staffing, executive search, and practical hiring guidance.