AI engineer salaries in the US are high by any standard in 2026. The national average base salary is $184,757, and average total compensation reaches $211,243 when additional cash compensation is included.

That headline number matters less than many organizations think. The real decisions sit underneath it: where the role is based, what level of autonomy the engineer brings, whether the offer is base-heavy or equity-heavy, and which skills the market is rewarding right now. For CTOs, those factors determine whether an offer closes quickly or sits open while product timelines slip. For candidates, they determine whether an offer that looks strong on paper holds up after tax, cost of living, and upside are taken into account.

Raw benchmarks stop being useful on their own in this context. A board-level hiring plan needs to know when to pay for senior autonomy, when to hire one level down, and when equity is a legitimate offset versus a weak substitute for cash. A senior candidate needs to know whether the negotiation should focus on base, remote benchmarking, certification advantages, or long-term upside.

Table of Contents

- What Is the Average AI Engineer Salary in 2026

- The National and Regional Salary Landscape

- Salary Ranges by Experience Level From Entry to Senior

- Understanding Your Total Compensation Package

- How Specializations Affect Your Earning Potential

- A Hiring Manager’s Guide to AI Talent Acquisition

- Key Takeaways for Candidates and Employers

What Is the Average AI Engineer Salary in 2026

In 2026, AI engineering sits near the top of the U.S. technical pay market. National benchmarks cited earlier put average base salary at roughly the mid-$180,000s, with total compensation materially higher once bonus and other cash components are included. The practical takeaway is clear. Companies are paying a premium for engineers who can ship, scale, and maintain production AI systems, not for candidates with surface-level model familiarity.
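The phrase "materially higher" can be put in numbers using the two national figures from the introduction. The minimal Python sketch below subtracts average base from average total compensation. It is an approximation: the two averages may come from different samples, so the gap describes typical additional cash rather than a reported bonus figure.

```python
# National averages quoted at the top of this article (US, 2026).
AVG_BASE = 184_757
AVG_TOTAL_COMP = 211_243


def additional_cash(base: int, total: int) -> int:
    """Gap between average total compensation and average base salary."""
    return total - base


def uplift_pct(base: int, total: int) -> float:
    """That gap expressed as a percentage of base salary."""
    return 100 * additional_cash(base, total) / base


extra = additional_cash(AVG_BASE, AVG_TOTAL_COMP)
print(f"Additional cash: ${extra:,}")  # Additional cash: $26,486
print(f"Uplift over base: {uplift_pct(AVG_BASE, AVG_TOTAL_COMP):.1f}%")  # Uplift over base: 14.3%
```

Roughly $26,000, or about 14% on top of base, is the cash layer that a base-only comparison misses.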

That distinction matters in budgeting and negotiation. A national average is only a starting point because AI pay is shaped by delivery scope, revenue impact, and how close the role sits to production infrastructure. An engineer building retrieval pipelines, inference services, or agent orchestration for customer-facing products will usually command a different package than a candidate focused on experimentation or internal prototypes.

For candidates, this changes how to read an offer. Compare base, bonus, equity, signing incentives, and expected scope before deciding whether a package is strong. For employers, it changes how to build pay bands. Treating an AI engineer requisition as a standard software role often leads to slow searches, failed closes, or expensive re-hiring when the first hire lacks deployment depth.

The stronger benchmark is replacement cost. If a company saves on salary but adds three months of vacancy, slips a product launch, or relies on contractors to stabilize production, the cheaper hire was not cheaper.
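The replacement-cost argument is simple arithmetic. The sketch below is a minimal illustration under stated assumptions: `HiringScenario` and its fields are hypothetical names, and the example values are placeholders, not benchmarks from this article. The net monthly vacancy cost is assumed to already subtract the salary the company is not paying while the seat is open.

```python
from dataclasses import dataclass


@dataclass
class HiringScenario:
    """Hypothetical inputs for comparing a below-market offer with a market-rate one."""

    salary_saved: float              # total salary difference over the comparison window
    extra_vacancy_months: float      # additional months the seat stays open
    net_monthly_vacancy_cost: float  # assumed: contractor spend plus delay cost, net of unpaid salary

    def vacancy_cost(self) -> float:
        """Cost of the extra months the role sits open."""
        return self.extra_vacancy_months * self.net_monthly_vacancy_cost

    def net_savings(self) -> float:
        """Positive means the cheaper offer still saves money; negative means it did not."""
        return self.salary_saved - self.vacancy_cost()


# Illustrative placeholder numbers only; they are not benchmarks from this article.
scenario = HiringScenario(salary_saved=20_000, extra_vacancy_months=3, net_monthly_vacancy_cost=15_000)
print(scenario.net_savings())  # -25000
```

A negative result is the "cheaper hire was not cheaper" case. The useful exercise is estimating the net monthly vacancy cost honestly, since it usually dominates the result.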

Compensation discipline also requires adjacent-role context. A candidate who could credibly compete for ML platform, data engineering, or applied science work will benchmark across categories, not just titles. Reviewing a broader data science salary guide helps clarify where AI engineering should sit relative to neighboring technical bands.

That cross-functional comparison is becoming more important as employers compete with both domestic AI teams and adjacent global talent pools, including global business intelligence roles. The board-level implication is straightforward. AI compensation should be set as a product and infrastructure investment decision, especially for mid-market firms and startups that cannot outspend Big Tech and therefore need sharper role design, faster interview cycles, and a more credible total-comp pitch.

The National and Regional Salary Landscape

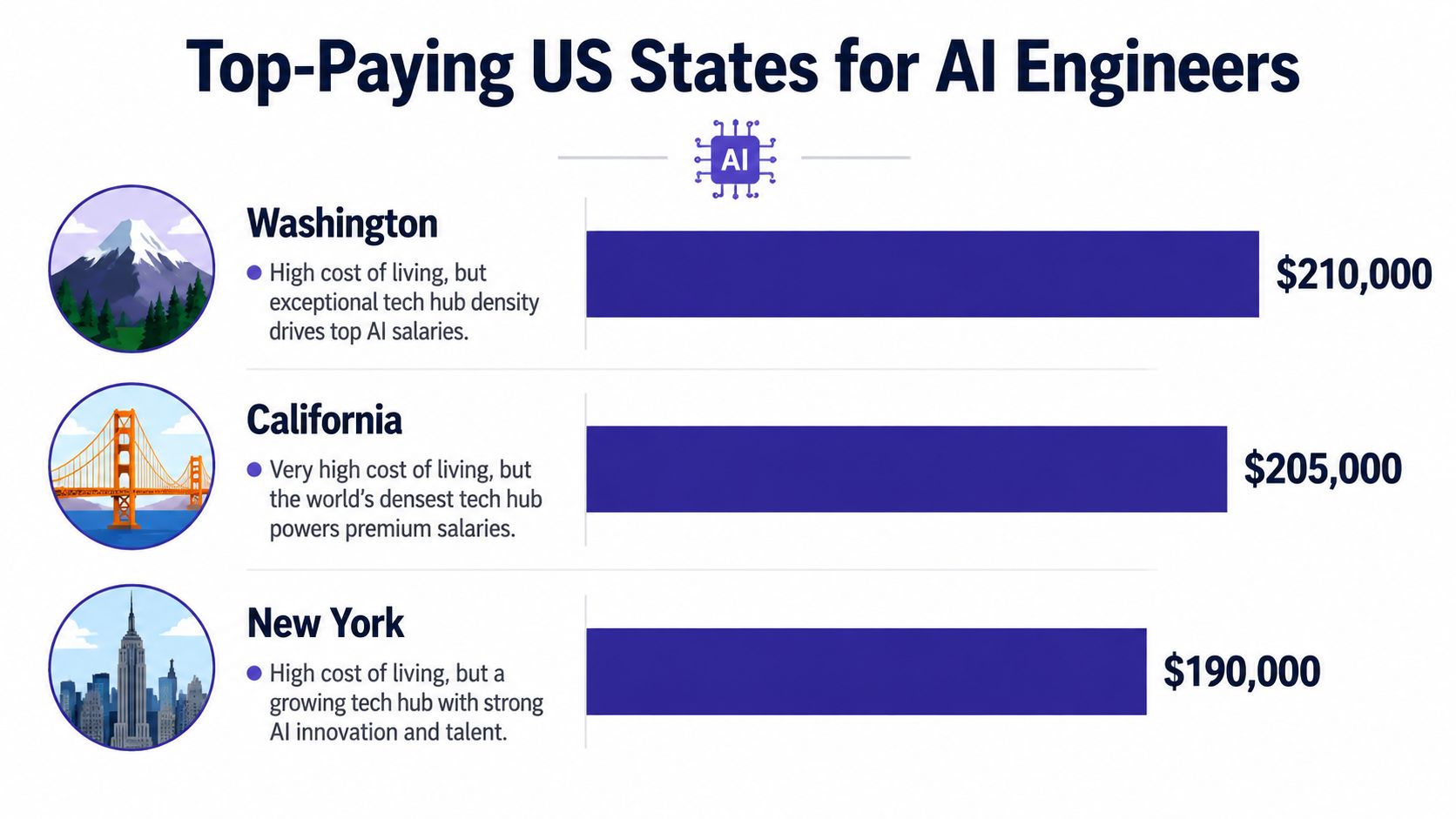

State differences still shape AI engineer salaries in the US. The highest-paying states cluster around three hubs: Washington, California, and New York. That pattern matters less as trivia than as a pricing signal. Employers in those markets are not only paying for technical skill. They are paying for tighter competition, faster offer cycles, and candidates with multiple adjacent options in platform engineering, applied ML, and data infrastructure.

Why Washington, California, and New York continue to set the market

Washington holds unusual appeal because high salaries meet favorable tax treatment: the state levies no personal income tax on wages. For candidates, that improves real purchasing power. For employers, it explains why Seattle-area compensation often stays aggressive even outside Big Tech. A company recruiting there is competing against firms that can offer both strong pay and a better net-income equation.

California remains the price-setting market for AI hiring. Many compensation committees still anchor to Bay Area benchmarks even when the role sits elsewhere. That creates a predictable problem for mid-market companies. If they copy California base pay without matching scope, equity upside, or technical brand, they often overpay for the wrong profile or lose late in the process to better-known firms.

New York competes differently. Its draw is not tax efficiency. It is the concentration of enterprise AI budgets across finance, media, advertising, and transformation programs. Candidates who want exposure to revenue-linked AI work, client-facing deployment, or regulated environments often accept that tradeoff. Employers hiring there should expect candidates to compare roles on business visibility and strategic scope, not just base salary.

How to use regional pay data in real decisions

Regional pay data is useful only if it changes the hiring plan or the negotiation strategy.

- For candidates: Compare offers on net outcome, promotion path, and equity quality. A lower-tax market can produce a stronger financial result than a nominally bigger offer in a higher-cost state. The better question is what you keep, what you own, and what scope you gain.

- For remote employers: National averages are a weak defense for discounting offers. Strong AI engineers know they can interview across state lines. If a remote role pays below top-quartile market rates, the company usually needs to compensate with faster advancement, clearer ownership, or meaningful equity.

- For mid-market firms and startups: Regional strategy should start with access-to-talent per dollar, not prestige. Secondary markets can work if the role is tightly defined and the interview process is fast. Teams assessing crossover compensation from adjacent analytics and engineering functions often review global business intelligence roles to calibrate where AI hiring should sit relative to broader data talent.
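The "what you keep" comparison for candidates can be sketched in a few lines of Python. This is a deliberate simplification under stated assumptions: each offer gets one blended effective tax rate, and both the salaries and the rates below are hypothetical placeholders, not real tax calculations for any state. (Washington's lack of a state income tax on wages is what makes a low blended rate plausible there, but actual rates depend on filing status, deductions, and federal brackets.)

```python
def net_income(gross: float, effective_tax_rate: float) -> float:
    """Take-home pay under one blended effective tax rate (a deliberate simplification)."""
    if not 0 <= effective_tax_rate < 1:
        raise ValueError("effective_tax_rate must be in [0, 1)")
    return gross * (1 - effective_tax_rate)


# Hypothetical offers and blended rates, not real tax calculations.
offer_low_tax_state = net_income(gross=200_000, effective_tax_rate=0.25)
offer_high_tax_state = net_income(gross=210_000, effective_tax_rate=0.31)
print(f"Low-tax offer keeps ${offer_low_tax_state:,.0f}")    # Low-tax offer keeps $150,000
print(f"High-tax offer keeps ${offer_high_tax_state:,.0f}")  # High-tax offer keeps $144,900
```

Under these assumed rates, the nominally smaller offer leaves more take-home pay. Cost of living and equity quality would then be layered on top of this net figure.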

The practical conclusion is simple. The best state for candidates is not always the one with the highest quoted salary, and the best hiring market is not always the one with the biggest brand density. Candidates should optimize for after-tax compensation and career signal. Employers should optimize for close rate, retention risk, and output per compensation dollar.

Salary Ranges by Experience Level From Entry to Senior

Experience remains the cleanest predictor of AI engineer pay in the US. The 2026 progression data shows average salaries of $121,513 for 1 to 3 years, $138,185 for 4 to 6 years, $155,008 for 7 to 9 years, $172,361 for 10 to 14 years, and $185,709 for 15+ years, based on 2026 experience benchmarks. That same benchmark notes that senior engineers in Big Tech can exceed $300,000 total compensation, while Anthropic software engineers reached $600,000 median total compensation in May 2026.

How the market prices autonomy

Experience isn’t valuable because of time served. It matters because employers are buying different levels of independence.

An engineer in the 1 to 3 year range is usually priced for execution. That often means model tuning, data preparation, experimentation support, and implementation under guidance. The salary reflects potential and technical foundation, not end-to-end ownership.

The 4 to 6 year range often marks the transition from contributor to owner. At that level, employers tend to expect more reliable production work, stronger code quality, and better judgment around deployment choices.

By 7 years and above, the market starts paying for autonomy. These engineers are usually expected to make architectural decisions, productionize models, handle tradeoffs around performance and reliability, and work across engineering, platform, and product teams without heavy supervision.

Senior pay isn’t a reward for tenure. It’s a premium for reducing management load and technical risk.

AI Engineer Salary by Experience Level 2026

| Experience Level | Years of Experience | Average Salary | Total Compensation Notes |
|---|---|---|---|
| Entry Level | 1 to 3 years | $121,513 | Lower than senior packages; structure varies by employer |
| Mid Level | 4 to 6 years | $138,185 | Often rises with bonus or equity, depending on company type |
| Senior | 7 to 9 years | $155,008 | Can exceed $300,000 total comp in Big Tech |
| Lead | 10 to 14 years | $172,361 | Typically above mainstream packages when scope includes architecture and leadership |
| Principal and above | 15+ years | $185,709 | Can stretch much higher at frontier or elite firms |
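The step-by-step increases between bands show where the average progression flattens. The minimal Python sketch below uses only the five averages in the table. These are average salary figures, not total compensation, so they understate the Big Tech and frontier-firm spread described in the notes column.

```python
# 2026 average salaries by experience band, as quoted in this section.
SALARY_BY_BAND = [
    ("1-3 years", 121_513),
    ("4-6 years", 138_185),
    ("7-9 years", 155_008),
    ("10-14 years", 172_361),
    ("15+ years", 185_709),
]


def step_increases(bands):
    """Return (from_band, to_band, dollar_increase, percent_increase) for each adjacent pair."""
    steps = []
    for (lo_label, lo_pay), (hi_label, hi_pay) in zip(bands, bands[1:]):
        delta = hi_pay - lo_pay
        steps.append((lo_label, hi_label, delta, 100 * delta / lo_pay))
    return steps


for lo, hi, delta, pct in step_increases(SALARY_BY_BAND):
    print(f"{lo} -> {hi}: +${delta:,} ({pct:.1f}%)")
```

The dollar steps hold near $17,000 through the lead band, then shrink to about $13,300 at 15+ years, while the percentage step falls from roughly 13.7% to 7.7%. Tenure alone buys less and less at the top, which is why employer tier and specialization end up explaining more of senior pay.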

The spread at the top matters more than the progression itself. Once a candidate reaches senior and lead levels, title alone stops explaining pay. Employer tier, production depth, and specialization take over. That’s why a candidate with a plain-sounding title can still command more than a nominally more senior peer if the work includes shipping LLM systems, PyTorch-based production pipelines, or core platform ownership.

For hiring managers, the key mistake is compressing bands. If a company prices entry, mid, and senior talent too closely, the strongest engineers will read that as a sign that leadership doesn’t understand the role. Candidates evaluating an offer should apply the same test: narrow gaps between levels usually signal weak role definition.

Understanding Your Total Compensation Package

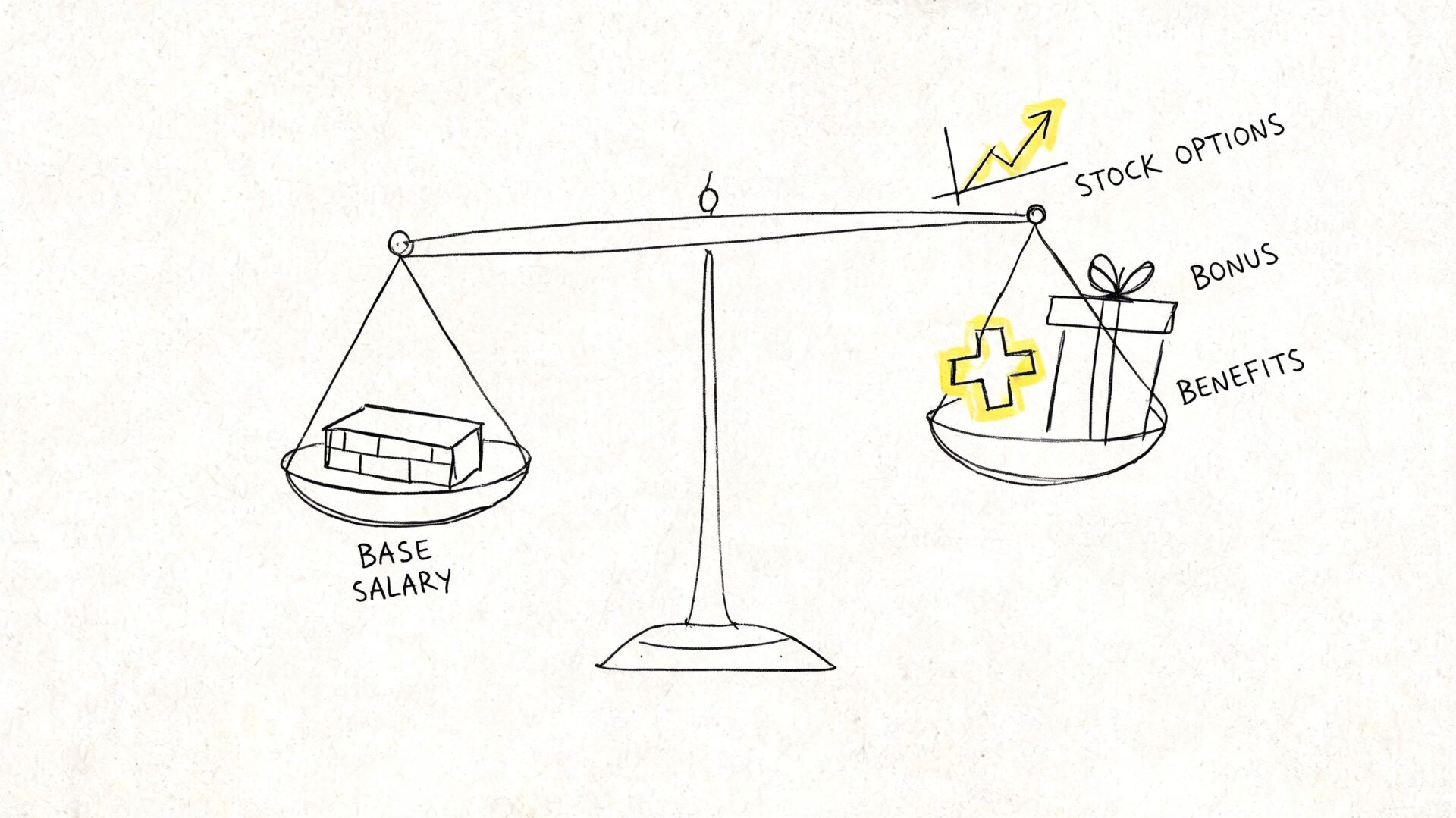

A strong AI offer isn’t just a base salary. Compensation structure changes the total value of a role, especially when a candidate is choosing between a large enterprise and a startup.

Why base salary alone is the wrong lens

The most useful contrast in the market is this one: FAANG-level firms average $244,800 total compensation for ML/AI engineers, while startups often offer 20% to 30% lower base salaries at $140k to $160k versus $185k+ at enterprises, according to 2025 to 2026 compensation comparisons. The same source notes that equity can offset part of that difference, with private equity-backed firms offering RSUs or options that can equal $50k to $100k annually for senior roles.

That creates two very different offer profiles. Enterprise packages tend to be more cash-forward and easier to value. Startup packages may look weaker on base and become more compelling only if the equity structure is credible.
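The base-versus-equity tradeoff can be framed as a small expected-value check. This minimal Python sketch uses the ranges quoted above ($140k to $160k startup base, $185k as the lower bound of the "$185k+" enterprise figure, and $50k to $100k in annual equity). Two assumptions are labeled explicitly: applying that equity range, which the source quotes for senior roles at private equity-backed firms, to a generic startup offer; and the payout probability `p_realized`, which is a hypothetical input, not a market statistic.

```python
ENTERPRISE_BASE = 185_000                  # lower bound of the quoted "$185k+" enterprise base
STARTUP_BASE_RANGE = (140_000, 160_000)    # quoted startup base range
EQUITY_ANNUAL_RANGE = (50_000, 100_000)    # quoted annual equity range for senior roles


def base_gap(startup_base: float, enterprise_base: float = ENTERPRISE_BASE) -> float:
    """Annual cash given up by accepting the startup base."""
    return enterprise_base - startup_base


def risk_adjusted_equity(nominal_annual: float, p_realized: float) -> float:
    """Crude expected value: equity pays out at face value with probability p_realized.

    Ignores dilution, strike price, taxes, and the timing of any liquidity event.
    """
    return nominal_annual * p_realized


def equity_covers_gap(startup_base: float, nominal_annual: float, p_realized: float) -> bool:
    """True if the risk-adjusted equity at least matches the base salary given up."""
    return risk_adjusted_equity(nominal_annual, p_realized) >= base_gap(startup_base)


# Worst case inside the quoted ranges: lowest base and smallest annual equity value.
print(base_gap(140_000))                        # 45000
print(equity_covers_gap(140_000, 50_000, 1.0))  # True: the grant covers the gap at face value
print(equity_covers_gap(140_000, 50_000, 0.5))  # False: not with an assumed 50% payout chance
```

The same package flips from adequate to inadequate depending on one belief about the equity, which is why credibility matters more than the headline grant value.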

Candidates who need a practical negotiation framework can adapt the compensation principles in this guide on how to negotiate salary effectively.

How startup and enterprise offers differ

A candidate evaluating two offers should ask four direct questions.

- How much is guaranteed cash: Base salary and bonus matter most when income stability is the priority.

- What kind of equity is being offered: RSUs at a mature company and options at an earlier-stage startup are not interchangeable.

- What has to go right for the upside to matter: Equity only bridges a lower base when the business case and timing are believable.

- What does the role control: A smaller company can justify some cash tradeoff if the engineer gets wider system ownership and visible impact.

The right comparison isn’t base versus equity. It’s certainty versus upside.

For employers outside Big Tech, this leads to a sharper strategy. They don’t need to mimic the total cash profile of elite firms if they can explain upside clearly and pair it with meaningful scope. But they do need honesty. Knowledgeable candidates can spot decorative equity quickly, and they discount it hard when the company can’t explain valuation, vesting logic, or probable liquidity path.

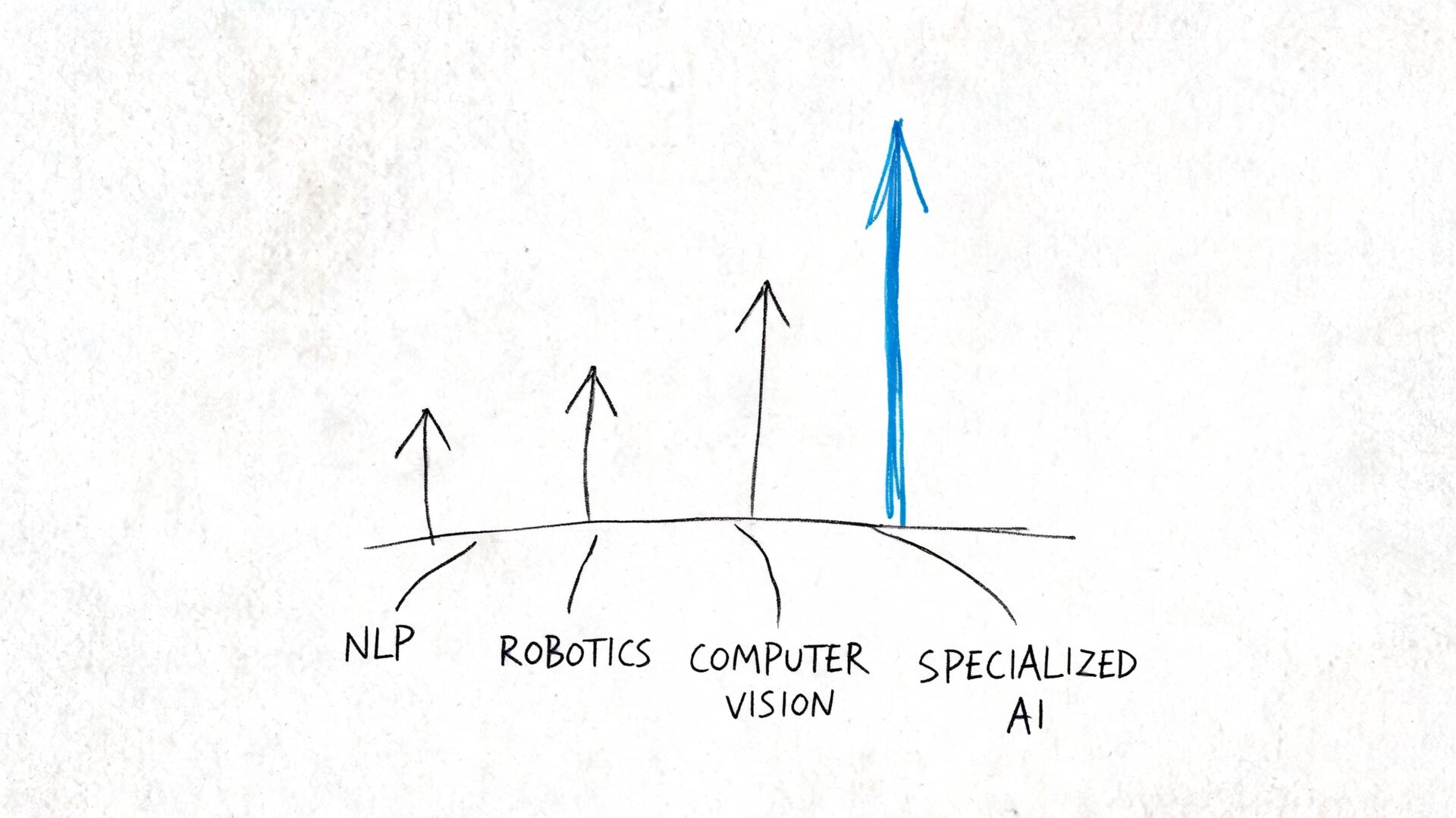

How Specializations Affect Your Earning Potential

Not every AI skill commands the same premium. The market is increasingly paying for combinations that solve hard business constraints, not for broad claims of “generative AI experience.”

The skills that command real premiums

The clearest data point is credential-linked. According to specialization and certification salary data, Google Professional ML Engineer certification holders earn 15% more ($165k base versus $143k), generative AI skills produce only a 5% to 8% premium, and overlap between cybersecurity and AI can push total compensation more than 25% higher, to $210k+.
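The certification figure is easy to sanity-check, and the generative AI premium can be put on the same scale. In the minimal Python sketch below, applying the 5% to 8% premium to the same $143k base is an illustrative assumption; the source does not say which base that premium is measured against.

```python
def premium_pct(with_skill: float, without_skill: float) -> float:
    """Pay premium as a percentage of the comparison salary."""
    return 100 * (with_skill - without_skill) / without_skill


CERT_BASE, NON_CERT_BASE = 165_000, 143_000
print(f"Certification premium: {premium_pct(CERT_BASE, NON_CERT_BASE):.1f}%")  # Certification premium: 15.4%

# Assumption: the quoted 5% to 8% generative AI premium applied to the same $143k base.
low, high = (NON_CERT_BASE * (1 + p) for p in (0.05, 0.08))
print(f"Generative AI premium range: ${low:,.0f} to ${high:,.0f}")
```

The quoted "15% more" rounds down from about 15.4%. Under the stated assumption, a generative AI premium lands between roughly $150,150 and $154,440, well short of the certified figure.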

That comparison matters because it cuts through market noise. “Generative AI” as a label has become broad enough that it no longer guarantees strong differentiation on its own. Employers know many candidates can prompt an API. Far fewer can secure AI workflows, manage regulated data exposure, or deploy AI safely inside existing enterprise controls.

The same logic applies to niche combinations such as federated learning, AI ethics in regulated sectors, and cloud-AI hybrid roles. Even when every subdomain isn’t quantified in public benchmarks, the hiring pattern is clear: scarce skills tied to risk, compliance, or production reliability tend to convert into stronger compensation.

What that means for career planning and hiring

For candidates, the earning strategy is narrower than it looks.

- Build proof, not slogans: PyTorch, production ML systems, cloud deployment, and security integration carry more weight than generic AI branding.

- Use certifications selectively: A respected certification can work when it validates applied capability. It won’t rescue a weak project track record.

- Prioritize scarce intersections: Security plus AI, cloud infrastructure plus AI, and regulated-domain AI tend to create stronger negotiation power than generalist positioning.

For employers, specialization changes sourcing strategy.

A hiring team that writes every req as “AI engineer” will attract volume and create screening noise. A team that specifies model deployment, enterprise integration, or cybersecurity-adjacent AI work will attract a narrower and more qualified pool. That usually improves hiring efficiency faster than increasing broad top-of-funnel activity.

The best-paid candidates often look less flashy on paper. Their value sits in the hard parts of deployment, governance, and systems integration.

A Hiring Manager’s Guide to AI Talent Acquisition

Senior AI hiring has a market threshold, and ignoring it gets expensive. The strongest signal in current benchmarks is the link between a compensation floor and time-to-fill.

The senior hiring threshold that changes recruiting speed

Organizations that fail to meet the $200,000 base salary floor for senior AI engineers in 2026 face an average time-to-fill of 114 days, which is 40% to 50% longer than competitive offers, according to 2026 AI engineering salary benchmarks. The same benchmark reports that remote senior roles now have a median of $206,600, anchored to national tech-hub standards.
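The benchmark gives the slow-fill figure and the percentage gap, but not the competitive time-to-fill itself. That figure can be backed out with one division. The minimal Python sketch below derives it. The resulting day counts are implied by the two quoted numbers, not reported directly by the benchmark.

```python
SLOW_FILL_DAYS = 114  # average time-to-fill when the $200k senior floor is not met


def implied_competitive_days(slow_days: float, pct_longer: float) -> float:
    """If slow_days is pct_longer percent longer than X, solve for X."""
    return slow_days / (1 + pct_longer / 100)


for pct in (40, 50):
    competitive = implied_competitive_days(SLOW_FILL_DAYS, pct)
    print(f"{pct}% longer -> competitive offers fill in ~{competitive:.0f} days "
          f"({SLOW_FILL_DAYS - competitive:.0f} extra days of vacancy)")
```

Competitive offers therefore fill in roughly 76 to 81 days, so a below-floor offer adds about five to six weeks of vacancy on top of an already long search.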

That changes the economics of “saving money” on compensation. A below-market offer isn’t just a lower salary line. It can create nearly four months of vacancy, delayed implementation, and rework for the adjacent teams covering the gap.

For companies hiring into core platform, product, or infrastructure roadmaps, that tradeoff rarely works in their favor. A delayed senior AI hire can slow multiple streams at once because these roles often sit at the intersection of architecture, deployment, and cross-functional execution.

A practical pay-band framework

A board or CTO building a realistic offer strategy can use a simple decision model.

- If the role requires senior autonomy: Budget above the market floor. Senior autonomy here means a candidate who can operate on production systems, make architecture decisions, and integrate AI into enterprise environments without constant oversight.

- If the budget can’t support that level: Re-scope the role toward mid-level execution and support it with stronger internal leadership.

- If the role is remote: Benchmark against national hub competition, not only local salary history.

- If the process is slow: Compensation may not be the only issue, but under-budgeting and over-interviewing often appear together.
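The first three rules above can be encoded as a simple check on a requisition. This minimal Python sketch uses the $200,000 senior floor and the $206,600 remote senior median from this section. `Requisition` and `recommend` are hypothetical names, and using the remote median as the benchmark for remote roles is an assumption about how to apply "national hub competition". The fourth rule (slow process) is left out because the section gives no threshold for "slow".

```python
from dataclasses import dataclass

SENIOR_BASE_FLOOR = 200_000     # 2026 senior base floor cited in this section
REMOTE_SENIOR_MEDIAN = 206_600  # 2026 remote senior median cited in this section


@dataclass
class Requisition:
    """Hypothetical description of one open AI engineering role."""

    needs_senior_autonomy: bool
    max_base_budget: int
    is_remote: bool


def recommend(req: Requisition) -> list[str]:
    """Apply the section's decision rules to one requisition and return guidance strings."""
    advice = []
    # Assumption: remote roles are benchmarked against the remote senior median.
    benchmark = REMOTE_SENIOR_MEDIAN if req.is_remote else SENIOR_BASE_FLOOR
    if req.needs_senior_autonomy:
        if req.max_base_budget >= benchmark:
            advice.append(f"Budget supports a senior offer (benchmark ${benchmark:,}).")
        else:
            advice.append("Budget is below the senior benchmark: re-scope toward "
                          "mid-level execution and add stronger internal leadership.")
    if req.is_remote:
        advice.append("Benchmark against national hub competition, not local salary history.")
    return advice


for line in recommend(Requisition(needs_senior_autonomy=True, max_base_budget=203_000, is_remote=True)):
    print(line)
```

In the usage example, a $203,000 budget clears the general floor but not the assumed remote benchmark, so the sketch recommends re-scoping. That edge case is exactly where the remote rule changes the outcome.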

A senior AI engineer pay band should describe scope as clearly as it describes pay. Employers looking for practical support on role calibration and search strategy often use specialized AI engineer recruiters and staffing support when internal teams need faster access to qualified candidates.

A company doesn’t need to pay frontier-lab rates to hire well. It does need to know the difference between a stretch offer and a non-credible one.

The deeper conclusion is this: compensation is a filtering mechanism. It tells the market how serious the company is, how well it understands AI execution, and whether the role has board-level backing. Candidates interpret all three.

Key Takeaways for Candidates and Employers

AI engineer pay in the US has spread far enough across level, specialty, and company type that a single average is no longer a useful decision tool. The practical question is who captures value from that spread. Candidates who can tie their work to production outcomes usually command stronger offers. Employers who define scope, compensation mix, and interview design with precision usually fill roles faster and with less rework.

That is why salary data matters only when it changes behavior. For candidates, it sharpens negotiation. For employers, it improves role design, offer credibility, and close rates.

Candidates entering a competitive interview loop can improve their position by pairing salary benchmarks with clear business-case storytelling and targeted practice through an AI interview prep tool. That matters most when interviews test judgment on deployment, integration, tradeoffs, and failure handling rather than model theory alone.

For candidates

- Benchmark the role, not the headline number: Compare offers by seniority, geography, and technical scope. An ML platform role, an LLM application role, and an MLOps-heavy role may carry very different pay ceilings even under the same title.

- Model total compensation in cash terms first: Base salary drives immediate income. Equity matters only if the grant size, dilution risk, vesting terms, and company trajectory are credible.

- Negotiate on the execution risk you remove: Hiring teams pay more for candidates who can reduce time-to-production, improve model reliability, and work across engineering, data, and product without heavy supervision.

- Use specialization carefully: Scarcity pays when it maps to a business need. Domain depth in infrastructure, applied NLP, recommendation systems, or regulated environments often has more pricing power than broad generative AI messaging.
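"Model total compensation in cash terms first" can be made concrete by keeping cash and equity as separate numbers before summing them. The minimal Python sketch below assumes straight-line vesting with no cliff, and treats the target bonus as if it pays out in full. `Offer` is a hypothetical name, and both offers are illustrative placeholders, not figures from this article.

```python
from dataclasses import dataclass


@dataclass
class Offer:
    """Hypothetical offer terms; equity is valued at the employer's stated grant value."""

    base: float
    target_bonus_rate: float    # e.g. 0.10 for a 10% target bonus (payout not guaranteed)
    equity_grant_value: float   # stated grant value at offer time
    vesting_years: int          # assumed straight-line vesting, no cliff

    def cash_per_year(self) -> float:
        """Base plus target bonus."""
        return self.base * (1 + self.target_bonus_rate)

    def annual_equity(self) -> float:
        """Annualized grant value, ignoring cliffs, dilution, and price changes."""
        return self.equity_grant_value / self.vesting_years

    def total_per_year(self) -> float:
        return self.cash_per_year() + self.annual_equity()


offers = {
    "cash-forward": Offer(base=185_000, target_bonus_rate=0.10, equity_grant_value=100_000, vesting_years=4),
    "equity-heavy": Offer(base=150_000, target_bonus_rate=0.0, equity_grant_value=400_000, vesting_years=4),
}
for name, o in offers.items():
    print(f"{name}: cash ${o.cash_per_year():,.0f}, "
          f"equity ${o.annual_equity():,.0f}, total ${o.total_per_year():,.0f}")
```

Under these assumptions, the equity-heavy offer wins on paper ($250,000 versus $228,500 per year), while the cash-forward offer leads by about $53,500 in cash. Whether that paper lead is real depends entirely on the grant size, dilution risk, vesting terms, and company trajectory named above.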

For employers

- Set compensation after defining the operating level: If the company needs an engineer who can ship production AI systems with limited oversight, the offer must match that requirement. If budget is tighter, narrow the scope and add support.

- Benchmark against your actual competition set: A startup in a mid-cost market still competes with remote-first employers and larger firms for proven AI talent. Local salary history is often the wrong reference point.

- Audit title, scope, and pay for consistency: A senior title attached to mid-level responsibilities can work. A senior title attached to senior responsibilities with mid-level pay usually fails in the market.

- Treat compensation as part of delivery economics: Underpaying a hard-to-fill AI role can look efficient on paper and become expensive through vacancy time, slower launches, contractor spend, and missed product timelines.

The strongest hiring strategies now come from firms that translate salary data into a full offer thesis. Mid-market companies and startups rarely win by copying Big Tech cash packages. They win by being explicit about scope, decision rights, equity realism, manager quality, and speed of execution.

For candidates, the same principle applies in reverse. The best offer is not always the highest base. It is the package where compensation, authority, technical challenge, and company trajectory line up with the next two to four years of career capital.

nexus IT group helps employers hire hard-to-find technology talent and helps experienced candidates manage high-stakes career moves across AI, cloud, cybersecurity, data, and software engineering. Teams that need a recruiting partner with deep technical market knowledge can connect with nexus IT group.