90% of hiring managers report difficulty sourcing skilled candidates in a tight talent market, according to Recruiterflow’s recruitment statistics roundup. That number reframes sourcing for recruitment. It isn’t a side task for junior recruiters. It’s the top-of-funnel work that decides whether a hiring team gets a credible shortlist or spends weeks reviewing noise.

In hard-to-fill tech hiring, the difference between average and strong sourcing shows up early. Weak sourcing starts after the requisition opens, leans too hard on job boards, and treats volume as progress. Strong sourcing starts before the role becomes urgent, maps adjacent talent pools, and builds contact lists that can convert when the business is ready to move.

That matters most in AI, cybersecurity, cloud, DevOps, and quant hiring. These candidates rarely sit in one channel, and they usually aren’t waiting to apply. Good sourcing for recruitment finds evidence of capability where that work shows up: code repositories, technical communities, alumni networks, referral loops, meetups, conference rosters, niche forums, and prior finalist pools inside the ATS.

Table of Contents

- Why Sourcing Matters More Than Ever in 2026

- The Recruiter’s Toolkit: Core Sourcing Channels and Techniques

- Specialized Sourcing Playbooks for Tech’s Toughest Roles

- Mastering Candidate Outreach and Engagement

- Building and Managing Your Talent Pipeline

- Measuring What Matters: Sourcing KPIs and Reporting

- Sourcing Pitfalls, Compliance, and Diversity Strategies

Why Sourcing Matters More Than Ever in 2026

Hard-to-fill technical roles rarely fail because recruiters cannot post a job. They fail because the team starts too late, targets too broadly, and treats sourcing like an admin task instead of a pipeline discipline.

For generalist hiring, inbound flow can carry more of the load. For AI research, quant development, cloud security, or offensive security hiring, that approach breaks down fast. The qualified population is smaller, overlap between competing employers is high, and many strong candidates are not applying. They are shipping code, publishing papers, tuning models, running incident response, or already fielding outreach from three other firms.

That changes how recruiting should operate. By the time a req opens, a strong team should already know the target companies, adjacent backgrounds, likely compensation pressure, and where the talent spends time. If that work starts after intake, time-to-fill expands before the first message goes out.

I usually frame sourcing as a business input, not a recruiting activity.

Done well, it reduces wasted screens, improves shortlist quality, and gives hiring managers a clearer view of the market before they reject realistic talent. In niche tech hiring, those gains show up in metrics leaders care about. Fewer stalled searches. Faster calibrated pipelines. Better quality of hire six months after start date because the slate was built around real capability signals, not just resume keywords.

The mechanics look a lot like disciplined outbound. That’s one reason recruiting teams can borrow useful ideas from ReachInbox’s lead generation insights. Targeting, sequencing, personalization, and follow-up all affect response rates. The difference is that recruiter outreach has to account for career risk, compensation context, and technical credibility.

Practical rule: If sourcing begins only after headcount approval, the team has already given up speed.

Three sourcing modes matter in 2026:

- Applicant-driven recruiting fills roles that already have enough market visibility and candidate supply.

- Proactive sourcing reaches qualified people before they enter the market, which is often the only way to build a viable slate for AI, quant, and cyber roles.

- Strategic sourcing creates reusable assets: market maps, calibrated target lists, prior outreach history, and warm relationships that shorten future searches.

The distinction matters because each mode produces different outcomes. A generic sourcing motion may be enough for a mid-level software engineer search. It is rarely enough for an LLM infrastructure engineer, a low-latency C++ quant, or a cloud detection engineer with hands-on SIEM depth. Those searches need role-specific pipelines built around actual evidence of fit, and the tools need to support that work. A stack built for search quality, tracking, and outreach consistency matters more than another posting channel. Teams reviewing their stack can compare options in this guide to recruitment tools for modern hiring teams.

Sourcing now carries senior-level weight because it decides whether the hiring process starts with signal or with guesswork. In difficult tech markets, that choice has a direct effect on time-to-fill, offer acceptance, and the quality of people who make it into the process at all.

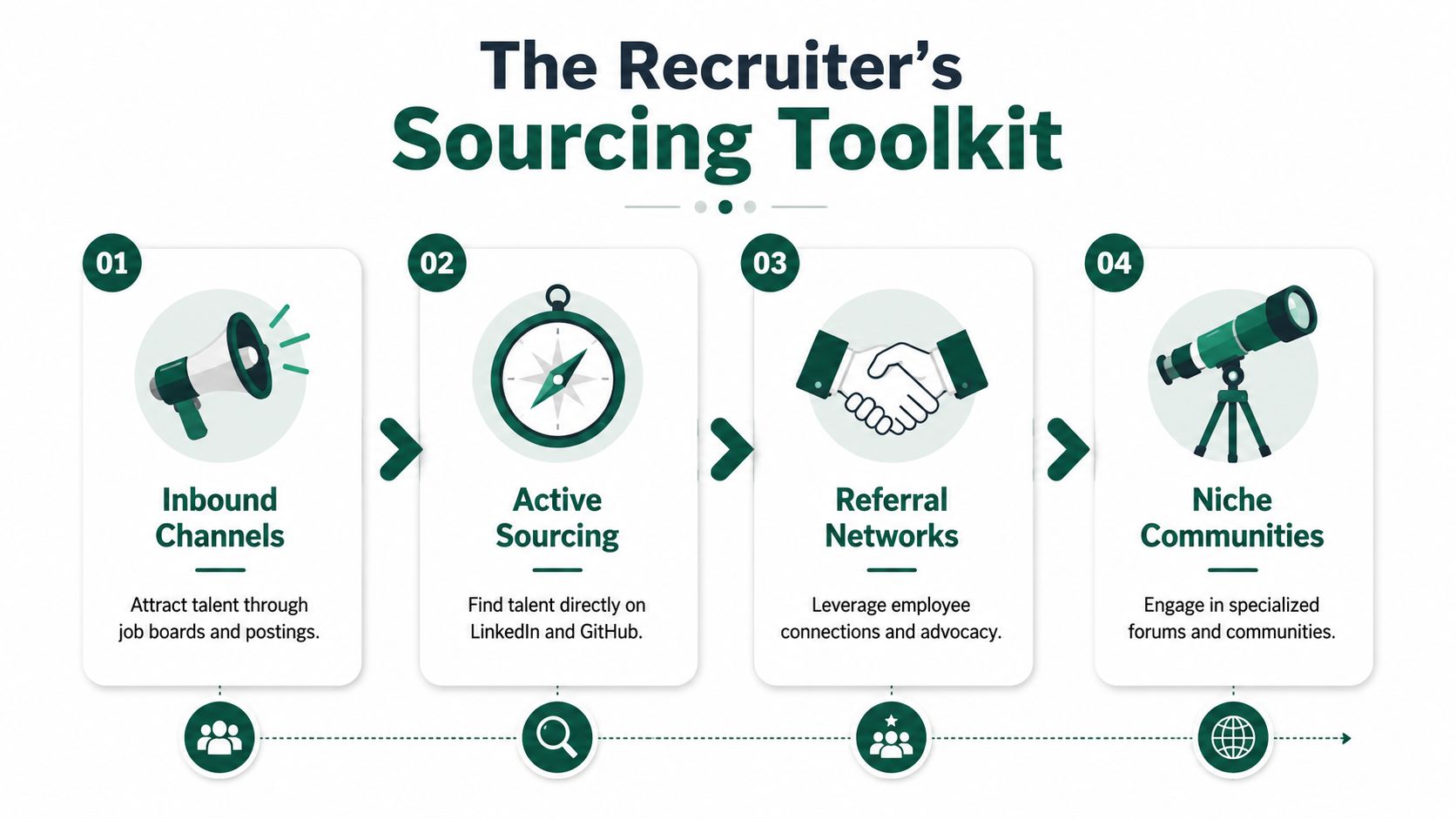

The Recruiter’s Toolkit: Core Sourcing Channels and Techniques

A strong sourcing workflow uses multiple channels on purpose. The goal isn’t to touch every platform. It’s to use the right platform for the signal needed.

Start with channels that show actual signal

LinkedIn Recruiter is still a core tool because it’s efficient for company filtering, title patterns, geography, and recent career movement. It’s useful at the start of a search, especially when the hiring manager hasn’t clarified target companies or candidate adjacencies. AIHR’s recruiting metrics guide, in its discussion of sourcing metrics and channel effectiveness, reports that 77% of recruiters use LinkedIn.

GitHub works differently. It’s less useful for polished profile summaries and more useful for evidence. For software, platform, DevOps, and security roles, repositories, contribution patterns, starred projects, issue participation, and tooling choices can say more than a resume.

Dice and similar technical job boards still have value, mostly for active candidates and for markets where contract hiring is common. They’re not ideal as a standalone strategy for niche talent, but they can fill gaps when a team needs candidates who are available now.

Referral networks deserve more structure than they typically receive. A referral program isn’t just an internal announcement asking for names. It works when recruiters tell employees exactly what kind of background, environment, and stack they’re targeting.

Offline channels also matter. Conference attendee lists, meetup speakers, alumni directories, open-source maintainers, hackathon participants, and professional associations often surface people who won’t respond to generic sourcing on a crowded platform.

Good sourcers don’t ask, “Where can this role be posted?” They ask, “Where does this type of person spend time when they’re doing the work?”

Use Boolean well or don’t use it at all

Boolean isn’t obsolete. Bad Boolean is.

Most weak searches fail for one of two reasons. They’re too loose, so the recruiter gets a flood of irrelevant profiles. Or they’re too narrow, so strong adjacent candidates disappear.

A few practical rules help:

- Use synonyms aggressively. Titles vary more than recruiters expect. “Site Reliability Engineer,” “SRE,” “Platform Engineer,” and “Production Engineer” can overlap.

- Search for capability, not only titles. Tools, environments, and problem domains often matter more than job labels.

- Exclude obvious noise carefully. The NOT operator is useful, but recruiters often overuse it and remove adjacent talent by accident.

- Build location logic deliberately. Remote doesn’t mean anywhere. It often means a defined region, time zone, or work authorization pattern.

Here’s a simple working reference.

| Goal | Example Boolean String |
|---|---|
| Find backend engineers with cloud experience | ("backend engineer" OR "software engineer" OR "backend developer") AND (Java OR Golang OR Python) AND (AWS OR Azure OR GCP) |
| Find DevOps or SRE talent | ("site reliability engineer" OR SRE OR "devops engineer" OR "platform engineer") AND (Kubernetes OR Terraform OR "CI/CD") |
| Find data scientists with production ML exposure | ("data scientist" OR "machine learning engineer") AND (Python OR PyTorch OR TensorFlow) AND (MLOps OR deployment OR production) |
| Find security engineers in cloud environments | ("security engineer" OR "cloud security engineer" OR "application security engineer") AND (AWS OR Azure OR GCP) AND (IAM OR SIEM OR detection OR threat) |
| Find quant developers with low-latency backgrounds | ("quant developer" OR "software engineer") AND (C++ OR Python) AND ("low latency" OR trading OR market OR exchange) |
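
Strings like the ones in the table are easier to maintain when they are assembled from synonym lists rather than typed by hand. The sketch below is one possible way to do that in Python. The helper names `or_group` and `build_boolean` are hypothetical, the quoting rule (quote anything containing a space or a slash) is an assumption, and the exact `NOT` syntax differs between search platforms, so check the target tool before relying on it.

```python
def _quote(term):
    """Wrap a term in straight quotes when it contains a space or a slash."""
    return f'"{term}"' if any(ch in term for ch in " /") else term


def or_group(terms):
    """Join synonyms into one parenthesized OR group."""
    return "(" + " OR ".join(_quote(t) for t in terms) + ")"


def build_boolean(*groups, exclude=()):
    """AND several OR groups together, then append NOT terms (use sparingly)."""
    query = " AND ".join(or_group(g) for g in groups)
    for term in exclude:
        query += f" NOT {_quote(term)}"
    return query


# Synonym lists taken from the DevOps/SRE row of the table.
titles = ["site reliability engineer", "SRE", "devops engineer", "platform engineer"]
tools = ["Kubernetes", "Terraform", "CI/CD"]

query = build_boolean(titles, tools)
print(query)
```

Keeping titles and tools in separate lists makes it easy to widen one group (add “Production Engineer”, for example) without touching the rest of the search.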

Treat tools as workflow multipliers

Channel choice matters, but workflow discipline matters more. Data-driven sourcing works when teams compare which channels produce quality hires rather than just activity. That’s the core point in iCIMS’ guide to data-driven sourcing strategy. A high-volume source that produces weak pass-through isn’t helping.

A few tools support that workflow well:

- Search and discovery tools. LinkedIn Recruiter, GitHub, Kaggle, and role-specific communities.

- Parsing and enrichment tools. A structured resume parser can help teams normalize inbound resumes and older ATS records so reusable candidates don’t stay buried in attachment-heavy databases.

- Recruiting systems. ATS and CRM tools become more valuable when recruiters tag for skills, seniority, stack, interview outcome, and re-engagement timing.

- Reference stacks. For teams evaluating software and workflow options, this roundup of recruitment tools is a useful starting point.

One additional option sits between internal recruiting and broad agency usage. Nexus IT Group works on hard-to-fill IT roles across areas like cloud, cybersecurity, data science, DevOps, and quant recruitment, which makes it relevant when an internal team needs specialized market reach rather than generic applicant flow.

Specialized Sourcing Playbooks for Tech’s Toughest Roles

Generic sourcing logic breaks down in specialized searches because the evidence of fit changes by domain. A machine learning engineer, a cloud architect, and a quant developer may all look “technical” on paper, but the sourcing signals aren’t the same.

For niche technical hiring, skills assessment also matters earlier than is commonly assumed. Alpha Apex Group’s tech sourcing guidance notes that advanced tools can combine GitHub or Kaggle-style artifact discovery with skills assessments to reduce false positives and shorten interview-to-hire timelines.

AI and machine learning hiring

AI hiring goes wrong when recruiters collapse research, data science, applied ML, and production ML engineering into one req. These are often different profiles.

Useful sourcing signals include:

- Research-facing candidates. arXiv publications, conference presence, deep model specialization, experimentation depth.

- Applied ML engineers. Production deployment work, model serving, feature pipelines, inference optimization.

- LLM and gen AI practitioners. Retrieval systems, vector databases, evaluation pipelines, prompt orchestration, serving infrastructure.

A profile with many notebooks but little evidence of deployment may fit research or prototyping roles better than production engineering. A candidate with strong backend systems work and some ML deployment might be a better hire for an ML platform role than a pure data scientist.

Cloud and DevOps hiring

For cloud and DevOps roles, the work usually leaves fingerprints in infrastructure choices. GitHub repositories, toolchain references, architecture talks, and platform community activity often show more than keyword-heavy resumes.

Good searches look for:

- Terraform, Kubernetes, Helm, GitHub Actions, ArgoCD, or similar tooling

- Signals of operating production systems, not only building them

- Experience with observability, incident response, and reliability ownership

- Adjacencies such as SRE, platform engineering, release engineering, or infrastructure software

A platform engineer from a SaaS business may convert better into an SRE role than a candidate whose resume says “DevOps engineer” but reads like general systems administration.

A title match is useful. A work-pattern match is better.

Cybersecurity hiring

Cybersecurity sourcing requires a tighter distinction between specialty areas. “Security engineer” is often too broad to be useful.

The recruiter should decide which lane matters most:

- Cloud security

- Application security

- Detection and response

- GRC and compliance-heavy security

- Identity and access management

- Offensive security and red team

- Security operations leadership

GitHub can help in appsec and security tooling. Community conference participation can help for offensive security and research-heavy profiles. Prior employer context matters a lot. Someone from a regulated environment may be stronger for healthcare or government-linked searches than a candidate from a consumer startup, even if both share similar keywords.

Data science and analytics hiring

Kaggle can surface candidates with strong modeling instincts, but competition results alone don’t prove business impact. The best sourced profiles show a mix of statistics, experimentation, communication, and production collaboration.

Useful distinctions include:

- Analysts moving toward data science

- Data scientists who mainly build models

- ML engineers who productionize models

- Analytics engineers who improve data accessibility and quality

For this family of roles, the handoff between sourcing and hiring manager calibration matters a lot. If the team wants experimentation rigor, SQL fluency, and stakeholder communication, the sourcer shouldn’t overload the funnel with pure research profiles.

Quant and fintech hiring

Quant hiring punishes generic searches fast. The same Boolean pattern used for backend engineering won’t capture the right market structure, academic pedigree, or strategy context.

Promising channels include quantitative finance communities, alumni pools from mathematically strong programs, fintech engineering networks, and forums where low-latency or trading systems practitioners spend time. The distinction between quant researcher, quant developer, and machine learning engineer in a trading environment also has to stay sharp.

A quant developer search may require evidence of performance-sensitive systems work. A quant research search may need stronger signals in probability, modeling, and experimentation. A machine learning role in a fund may demand both software depth and domain adaptability.

Mastering Candidate Outreach and Engagement

Most outreach fails before the candidate reads the second line. The message is too generic, too recruiter-centered, or too eager to schedule a call without earning attention.

Good sourcing for recruitment doesn’t stop at list building. The outreach has to show that the recruiter understands what the candidate does.

What strong outreach includes

A strong first message usually has five parts:

- A subject line with signal. Keep it specific. Mention the domain, scope, or technical context instead of using vague career language.

- A real reason for reaching out. Mention a project, stack, domain, company pattern, or background detail that explains why this person made the list.

- A concise role thesis. Explain the challenge, not the full job description. Good candidates want to know the technical problem, team context, and why the work matters.

- A low-friction ask. Don’t force a full interview call in the first touch. Offer a short exploratory conversation or ask whether the role is relevant.

- Respectful tone. Senior engineers, security leaders, and quant candidates respond better when the recruiter sounds informed and direct, not overexcited or scripted.

For recruiters who want extra examples, this outreach book for tech recruiters is a useful reference.

Good message versus bad message

Weak outreach

Hi, your background looks great. I’m working on an amazing opportunity with a fast-growing company. It offers strong compensation and growth. Are you open to chatting this week?

This message fails because it could have gone to anyone. It gives no evidence of fit, no reason to trust the recruiter’s judgment, and no technical substance.

Stronger outreach

Hi [Name], reaching out because your background in Kubernetes and platform reliability at [Company] looks relevant to a team building internal developer infrastructure for a cloud-heavy environment. The role sits close to SRE and platform engineering, with ownership around deployment reliability and tooling. If that’s worth a quick conversation, happy to send more context first.

This version shows targeting discipline. It also makes the ask smaller.

Follow-up without turning into spam

Most candidates won’t respond to the first message. That doesn’t mean the target is wrong. It often means the timing is wrong or the first note landed in a crowded inbox.

A practical follow-up sequence usually works better when each touch adds something new:

- Second message. Add one useful detail about the team, architecture, or scope.

- Third message. Narrow the ask even further. Offer to share written context.

- Final touch. Close the loop politely so the candidate doesn’t feel trapped in an endless sequence.

Recruiters working passive talent should also study guidance on engaging passive job seekers, because the tone and pacing are different from outreach to active applicants.

Respect earns more replies than persistence alone.

Building and Managing Your Talent Pipeline

Most recruiting teams say they want a pipeline. What they have is a pile of old applicants, old notes, and old resumes that nobody can search cleanly.

A usable talent pipeline is organized around future retrieval. If a recruiter can’t find the right silver medalist for a related role six months later, the database isn’t a pipeline. It’s storage.

Build the pipeline around future use

The fastest way to improve sourcing efficiency is to tag candidates for how they might be reused, not just for the job they were tied to at the time.

Useful tags include:

- Core skill tags. Kubernetes, SIEM, PyTorch, Terraform, low-latency C++, data modeling.

- Role adjacency tags. Strong for SRE, possible appsec fit, future engineering manager, analyst-to-data-science bridge.

- Interview status tags. Screened, finalist, silver medalist, offer-ready, not now.

- Timing tags. Reconnect next quarter, after bonus cycle, after relocation, after contract end.

- Context tags. Compensation constraints, remote-only preference, regulated-industry background, leadership interest.

This is where ATS discipline proves its worth. Reusable profiles should be easy to rediscover by stack, domain, location, seniority, and outcome. If the search team relies only on memory, the pipeline won’t scale.
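
To make the tagging idea concrete, here is a minimal Python sketch of tag-based retrieval. It is not how any particular ATS works: the `Candidate` class, the `find` helper, and the `category:value` tag format are all assumptions made for illustration, and the candidate records are invented.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    name: str
    tags: set = field(default_factory=set)


def find(pool, required, excluded=()):
    """Return candidates carrying every required tag and none of the excluded ones."""
    required, excluded = set(required), set(excluded)
    return [c for c in pool if required <= c.tags and not (excluded & c.tags)]


# Hypothetical records; the tag prefixes mirror the tag families listed above.
pool = [
    Candidate("Candidate A", {"skill:kubernetes", "adjacent:sre", "status:silver-medalist"}),
    Candidate("Candidate B", {"skill:siem", "status:screened", "timing:after-bonus-cycle"}),
    Candidate("Candidate C", {"skill:kubernetes", "status:finalist", "context:remote-only"}),
]

# Kubernetes candidates for an onsite role, so remote-only profiles are skipped.
matches = find(pool, {"skill:kubernetes"}, excluded={"context:remote-only"})
print([c.name for c in matches])
```

The point of the prefix is retrieval: a recruiter six months later can ask for a skill plus a status without remembering which requisition the person was originally attached to.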

Keep warm candidates warm

A pipeline decays when recruiters only touch candidates at the moment of need. Good pipeline management uses light, relevant re-engagement.

That can include:

- Short update emails when a related role opens

- Targeted check-ins around likely movement windows

- Market updates for niche communities or specialized functions

- Relationship notes so future outreach doesn’t feel like a cold restart

The key is relevance. Passive candidates don’t need weekly nurture campaigns. They need occasional contact that reflects their area of work and remembers prior conversations.

A strong pipeline also includes former finalists who weren’t selected for reasons unrelated to quality. Many searches get rebuilt from zero when the best next hire was already known by the team.

The ATS should help recruiters remember what the market already taught them.

That mindset changes sourcing for recruitment from repeated one-off effort into a compounding asset. Each search adds names, context, and pattern recognition that improves the next one.

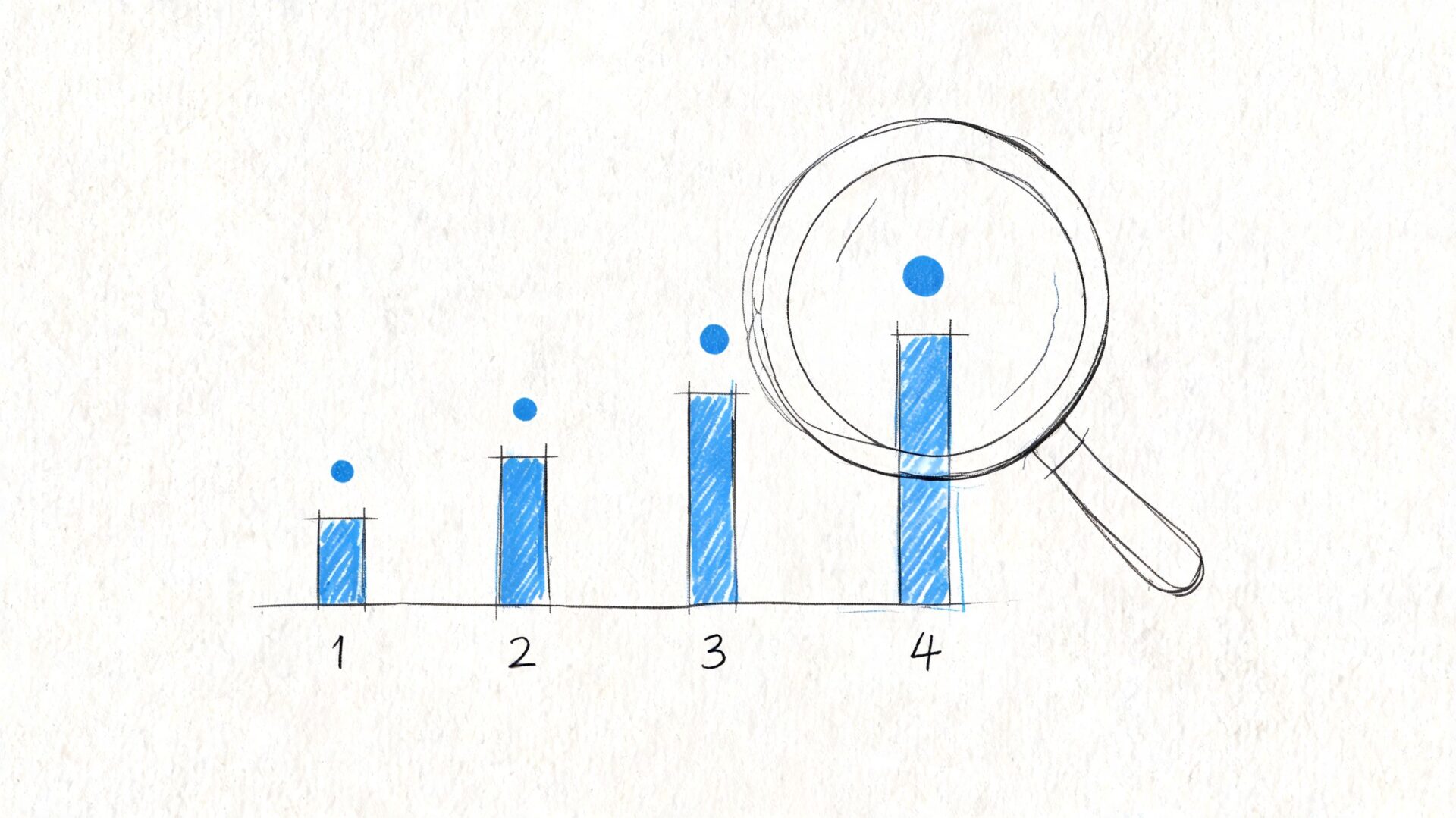

Measuring What Matters: Sourcing KPIs and Reporting

For hard-to-fill tech roles, sourcing usually drives the result long before the interview loop starts. If an AI research search takes 45 days to produce a viable slate, or a cyber search generates replies from the wrong part of the market, time-to-fill was already pushed off course.

That is why sourcing reports need to connect recruiter activity to hiring outcomes. A dashboard full of messages sent and profiles viewed may show effort, but it does not help a hiring leader decide where to add budget, which channels to cut, or why one niche pipeline outperforms another.

The metrics that deserve dashboard space

Keep the dashboard small and role-specific. A generic report hides the differences between hiring a platform engineer, a quant researcher, and a cloud security architect.

Track these first:

- Time to first qualified slate. How long it takes to present candidates the hiring manager would screen.

- Channel-to-interview rate. Which sources produce candidates who reach recruiter screen, technical screen, and onsite.

- Outreach response rate by role family. Response rates vary widely across AI, quant, and cyber. Measure them separately.

- Submission-to-hire ratio. How many sourced candidates it takes to produce one accepted hire.

- Quality of hire by source. Performance at 90 or 180 days, hiring manager satisfaction, or retention, depending on what your team can measure reliably.

I also track pass-through rates between stages. They show where a sourcing problem ends and a selection problem begins.

For example, if GitHub sourcing produces strong recruiter screens for security engineering roles but weak hiring-manager pass-through, the issue may be calibration on architecture depth, not channel quality. If referrals convert well for quant hires but stall at offer stage, compensation or location may be the actual constraint.
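
Pass-through and submission-to-hire are simple ratios, and a short Python sketch shows the arithmetic. The stage names, the `pass_through` and `submission_to_hire` helpers, and the counts are all hypothetical; a real funnel would use whatever stages the ATS records.

```python
STAGES = ["sourced", "recruiter_screen", "technical_screen", "onsite", "offer", "hired"]


def pass_through(counts):
    """Share of candidates moving from each stage to the next, keyed 'a->b'."""
    rates = {}
    for prev, nxt in zip(STAGES, STAGES[1:]):
        rates[f"{prev}->{nxt}"] = counts[nxt] / counts[prev] if counts[prev] else 0.0
    return rates


def submission_to_hire(counts):
    """Sourced candidates needed per accepted hire; None if nobody was hired yet."""
    return counts["sourced"] / counts["hired"] if counts["hired"] else None


# Invented counts for one channel on one role family.
github_security = {
    "sourced": 40, "recruiter_screen": 20, "technical_screen": 5,
    "onsite": 4, "offer": 2, "hired": 1,
}

for step, rate in pass_through(github_security).items():
    print(f"{step}: {rate:.0%}")
print("sourced per hire:", submission_to_hire(github_security))
```

In this invented funnel, half of the sourced candidates reach a recruiter screen but only a quarter of those reach a technical screen, which is the kind of drop that points to calibration rather than channel quality.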

Report by search type, not just by recruiter

This matters more in niche tech hiring than in generalist recruiting.

A sourcing team can look efficient in aggregate while missing the mark in the searches that cost the business the most. AI talent, low-latency engineering, detection engineering, and applied cryptography each require different channels, different outreach angles, and different market timing. Reporting should reflect that reality.

A useful reporting view compares performance across:

| Reporting cut | What it shows |
|---|---|
| Role family | Whether AI, quant, cyber, platform, or data searches need different sourcing plans |
| Source channel | Which channels create interviews, finalists, and hires |
| Recruiter or sourcer | Who is strongest at specific search types |
| Funnel stage | Where qualified candidates drop out |
| Hiring manager or business unit | Whether delays come from sourcing or from interview process friction |

This structure helps leaders make better decisions. If cyber roles fill fastest through community referrals and conference follow-up, budget should shift there. If AI research hires come mostly from re-engaged prospects and targeted outbound to labs, that pipeline deserves steady investment even if reply volume looks lower than LinkedIn outreach.
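
Cutting the same data by role family and channel is a small grouping exercise. The Python sketch below assumes an outreach log exported as rows of role family, channel, and whether the candidate replied; the `response_rates` helper and the rows themselves are made up for illustration.

```python
from collections import defaultdict


def response_rates(outreach_log):
    """Reply rate per (role_family, channel) from rows of (role_family, channel, replied)."""
    sent = defaultdict(int)
    replied = defaultdict(int)
    for role_family, channel, got_reply in outreach_log:
        key = (role_family, channel)
        sent[key] += 1
        replied[key] += int(got_reply)
    return {key: replied[key] / sent[key] for key in sent}


# Invented rows; real data would come from an ATS or CRM export.
log = [
    ("quant", "referral", True),
    ("quant", "referral", False),
    ("quant", "linkedin", False),
    ("cyber", "conference", True),
    ("cyber", "linkedin", False),
    ("cyber", "linkedin", True),
]

rates = response_rates(log)
for (family, channel), rate in sorted(rates.items()):
    print(f"{family:6} {channel:11} {rate:.0%}")
```

With real volumes, the same grouping can be repeated for interviews and hires, so that a channel with a low reply rate but a high hire rate is not cut by mistake.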

How to report sourcing in business language

Hiring leaders care about speed, quality, and risk. Reports should answer those points directly.

| Business question | Sourcing metric to show |
|---|---|
| How fast can we build a credible slate? | Time to first qualified slate |
| Which channels are worth budget for this role type? | Channel-to-interview and channel-to-hire rates |
| Are we reaching the right market segment? | Response rate and pass-through rate by role family |
| Where does the funnel actually break? | Stage conversion by source and by hiring team |
| Is sourcing improving hiring results? | Quality of hire, retention, and time-to-fill by source |

Use comments, not just charts. A short note such as "quant candidates are responding, but compensation is below hedge fund median" is more useful than a clean dashboard with no interpretation.

For teams trying to connect sourcing metrics with broader funnel issues, this guide on how to improve the hiring process is a practical reference. Sourcing performance only matters if screens are scheduled quickly, interview loops are calibrated, and offers close at a reasonable rate.

The goal is simple. Measure the sourcing inputs that improve time-to-fill and quality of hire, then build separate playbooks for the role families that drive the most hiring risk.

Sourcing Pitfalls, Compliance, and Diversity Strategies

A weak sourcing process adds days to time-to-fill long before anyone notices the funnel is off. In hard-to-fill tech hiring, the usual cause is not lack of effort. It is poor search design, inconsistent documentation, and narrow market coverage.

That shows up fast in AI, quant, and cybersecurity searches. A team can send hundreds of messages and still miss the right talent if the pipeline is built around the wrong titles, the wrong channels, or stale candidate data.

Search mistakes that undermine hard-to-fill hiring

The first failure is channel concentration. LinkedIn is useful, but it is rarely enough for niche technical hiring. Strong cryptography engineers may be easier to identify through research communities and conference trails. Quant developers often cluster in specific firms, alumni groups, and referral networks. Applied AI talent may be more visible through open-source work, published papers, Kaggle activity, or startup ecosystems than through a standard title search.

The second failure is literal intake conversion. Hiring managers often describe a person they have seen before, not the full range of people who can do the work. If the req says "Senior Machine Learning Engineer" but the underlying need is production model deployment on AWS, the pool should also include MLOps engineers, platform engineers with model-serving depth, and applied scientists who have owned inference systems in production.

The third failure is neglecting prior pipeline assets. Old finalist slates, silver medalists, past contractors, and declined offers often contain candidates who fit a new role with minor adjustments on scope, location, or compensation. Teams that ignore this data rebuild the market map from zero every time. That increases search cost and usually pushes time-to-fill out.

A better operating pattern looks like this:

- Build role-specific source maps. Define where AI, quant, cyber, and cloud talent shows up before outreach starts.

- Translate reqs into capabilities. Search for tooling, domain exposure, scale, and ownership, not just title matches.

- Rework archived talent pools. Revisit finalists and timing-based rejections before buying more top-of-funnel activity.

- Check search assumptions weekly. If reply rates are weak or screen pass-through is low, fix targeting first.

I have seen this cut wasted effort quickly. In cyber hiring, for example, title-only sourcing often pulls generic security analysts. Capability-based sourcing pulls people who have handled identity architecture, detection engineering, cloud incident response, or product security, which is what the hiring team usually needed in the first place.

Compliance discipline protects hiring quality

Compliance is not separate from sourcing quality. It is part of it.

Poor note-taking, inconsistent screens, and loose profile interpretation create risk for the company and confusion for the hiring team. They also make calibration harder because no one can tell why one candidate advanced and another did not.

Use a few rules every time:

- Document job-related reasons only. Notes should tie to required skills, relevant experience, certifications, scope, or domain context.

- Apply the same screening criteria across the slate. Specialized roles still need structured evaluation, even when backgrounds vary.

- Stay on job-relevant information. Public profiles can help verify work history or technical contributions, but personal attributes that are not relevant to the role should stay out of the process.

- Tighten process in regulated environments. Government, healthcare, defense, and heavily audited sectors usually require clearer documentation and approval steps.

This matters in reporting too. If a hiring manager asks why a search is stuck, the answer should be concrete. "Compensation is below market for this talent segment" is useful. "We are not finding enough quality" is not.

Diversity starts with market mapping

Diversity outcomes are shaped early, at the sourcing plan stage. If the target list includes the same companies, schools, cities, and title patterns every time, the pipeline will repeat itself.

The practical fix is to widen search logic without lowering the bar. For technical hiring, that usually means looking at adjacent backgrounds that build the same skills through different paths. A security engineer from a cloud infrastructure team may be a stronger fit than a candidate from a recognizable security brand with less hands-on depth. An AI engineer from a logistics company may have better production experience than a candidate from a famous lab with mostly research exposure.

Useful shifts include:

- Expand beyond default employers. Brand-name companies are one segment of the market, not the whole market.

- Use adjacencies on purpose. Focus on evidence of relevant work, not pedigree shortcuts.

- Add varied communities to the source mix. Alumni networks, technical associations, return-to-work groups, meetup communities, and specialized forums widen reach.

- Review outreach language for exclusion signals. Keep messages specific to business problems, technical scope, and impact.

The strongest diverse pipelines come from better search architecture and tighter intake discipline. They do not come from trying to repair a narrow slate at interview stage.

Sourcing for recruitment affects business outcomes directly. A well-built pipeline shortens time-to-fill, raises the odds of a credible finalist slate, and improves quality of hire because the market map is broader and the evaluation is cleaner.

Teams hiring for specialized IT roles often need more than extra resumes. They need a sourcing partner that understands technical adjacencies, passive candidate engagement, and how to build credible pipelines for hard-to-fill searches. Nexus IT Group supports employers across cloud, cybersecurity, AI, data, DevOps, software, and quant hiring with contract, direct placement, and executive search support.